diff --git a/.github/ISSUE_TEMPLATE/bug_report.md b/.github/ISSUE_TEMPLATE/bug_report.md

new file mode 100644

index 00000000..0f04a246

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/bug_report.md

@@ -0,0 +1,23 @@

+---

+name: Bug report

+about: Create a report to help us improve

+title: ''

+labels: ''

+assignees: ''

+

+---

+

+**需知**

+

+升级feapder,保证feapder是最新版,若BUG仍然存在,则详细描述问题

+> pip install --upgrade feapder

+

+**问题**

+

+**截图**

+

+**代码**

+

+```python

+

+```

diff --git a/.github/ISSUE_TEMPLATE/config.yml b/.github/ISSUE_TEMPLATE/config.yml

new file mode 100644

index 00000000..9ab3c9b8

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/config.yml

@@ -0,0 +1,6 @@

+# https://docs.github.com/en/github/building-a-strong-community/configuring-issue-templates-for-your-repository#configuring-the-template-chooser

+blank_issues_allowed: false # We have a blank template which assigns labels

+contact_links:

+ - name: Questions about using feapder?

+ url: "https://github.com/Boris-code/feapder/discussions"

+ about: Please see our guide on how to ask questions

\ No newline at end of file

diff --git a/.github/ISSUE_TEMPLATE/feature_request.md b/.github/ISSUE_TEMPLATE/feature_request.md

new file mode 100644

index 00000000..bbcbbe7d

--- /dev/null

+++ b/.github/ISSUE_TEMPLATE/feature_request.md

@@ -0,0 +1,20 @@

+---

+name: Feature request

+about: Suggest an idea for this project

+title: ''

+labels: ''

+assignees: ''

+

+---

+

+**Is your feature request related to a problem? Please describe.**

+A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

+

+**Describe the solution you'd like**

+A clear and concise description of what you want to happen.

+

+**Describe alternatives you've considered**

+A clear and concise description of any alternative solutions or features you've considered.

+

+**Additional context**

+Add any other context or screenshots about the feature request here.

diff --git a/.github/workflows/workflow.yml b/.github/workflows/workflow.yml

new file mode 100644

index 00000000..e69de29b

diff --git a/.gitignore b/.gitignore

index d6f90b5c..fedead23 100644

--- a/.gitignore

+++ b/.gitignore

@@ -14,4 +14,5 @@ dist/

.vscode/

media/

.MWebMetaData/

-push.sh

\ No newline at end of file

+push.sh

+assets/

\ No newline at end of file

diff --git a/CONTRIBUTING.md b/CONTRIBUTING.md

new file mode 100644

index 00000000..63d42cb0

--- /dev/null

+++ b/CONTRIBUTING.md

@@ -0,0 +1,15 @@

+# 贡献指南

+感谢你的宝贵时间。你的贡献将使这个项目变得更好!在提交贡献之前,请务必花点时间阅读下面的入门指南。

+

+## 提交 Pull Request

+1. Fork [此仓库](https://github.com/Boris-code/feapder.git),

+2. clone到本地,从 `develop` 创建分支,对代码进行更改。

+3. 请确保进行了相应的测试。

+4. 推送代码到自己Fork的仓库中。

+5. 在Fork的仓库中点击 Pull request 链接

+6. 点击「New pull request」按钮。

+7. 填写提交说明后,「Create pull request」。提交到`develop`分支。

+

+## License

+

+[MIT](./LICENSE)

diff --git a/README.md b/README.md

index 80dffe49..7bde6250 100644

--- a/README.md

+++ b/README.md

@@ -8,48 +8,25 @@

[](https://pepy.tech/project/feapder)

[](https://pepy.tech/project/feapder)

-

- -

-

## 简介

-**feapder是一款上手简单,功能强大的Python爬虫框架**

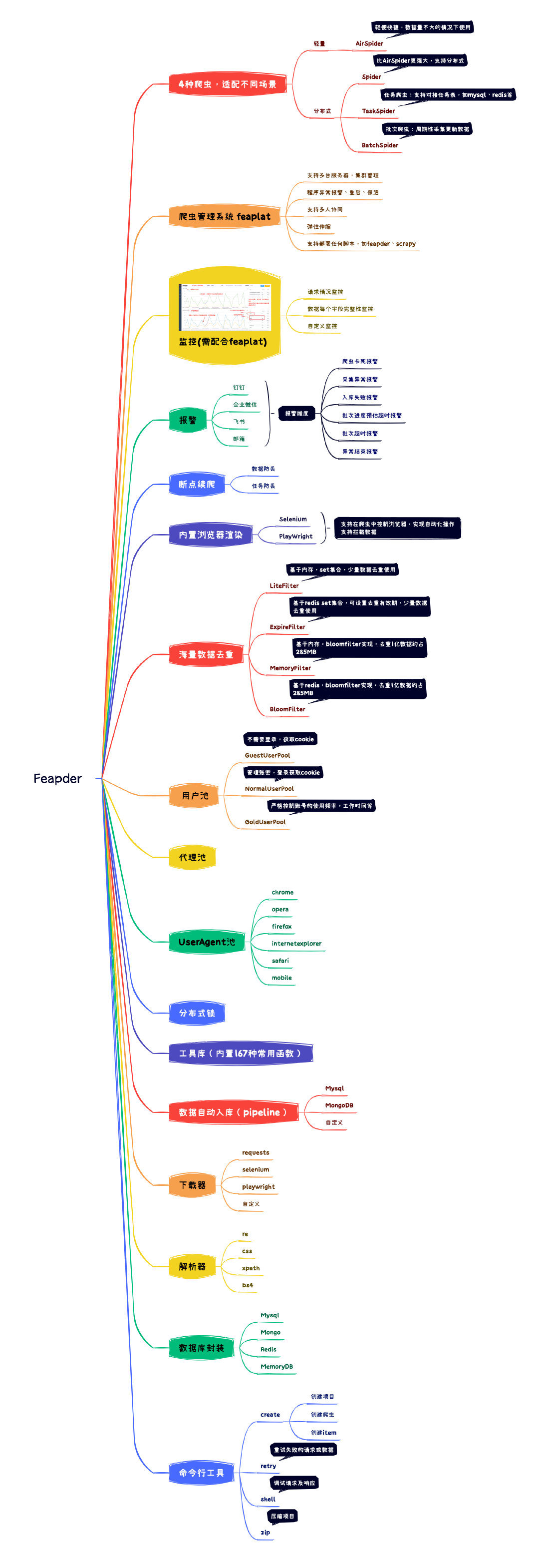

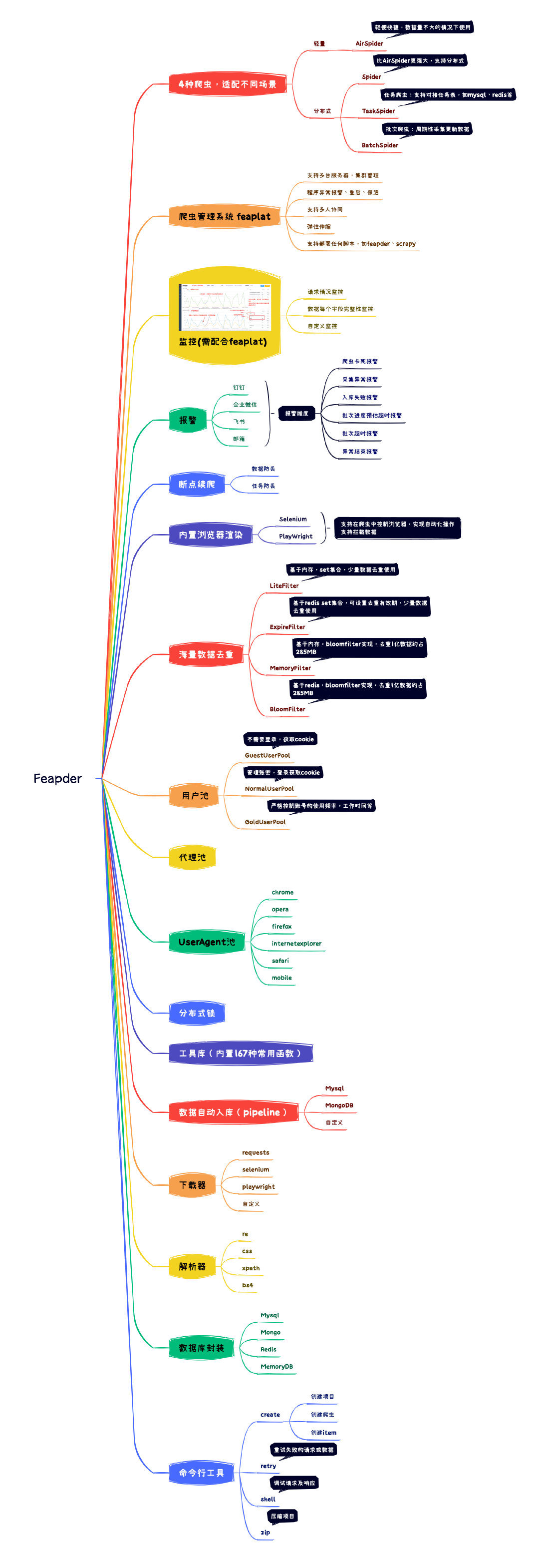

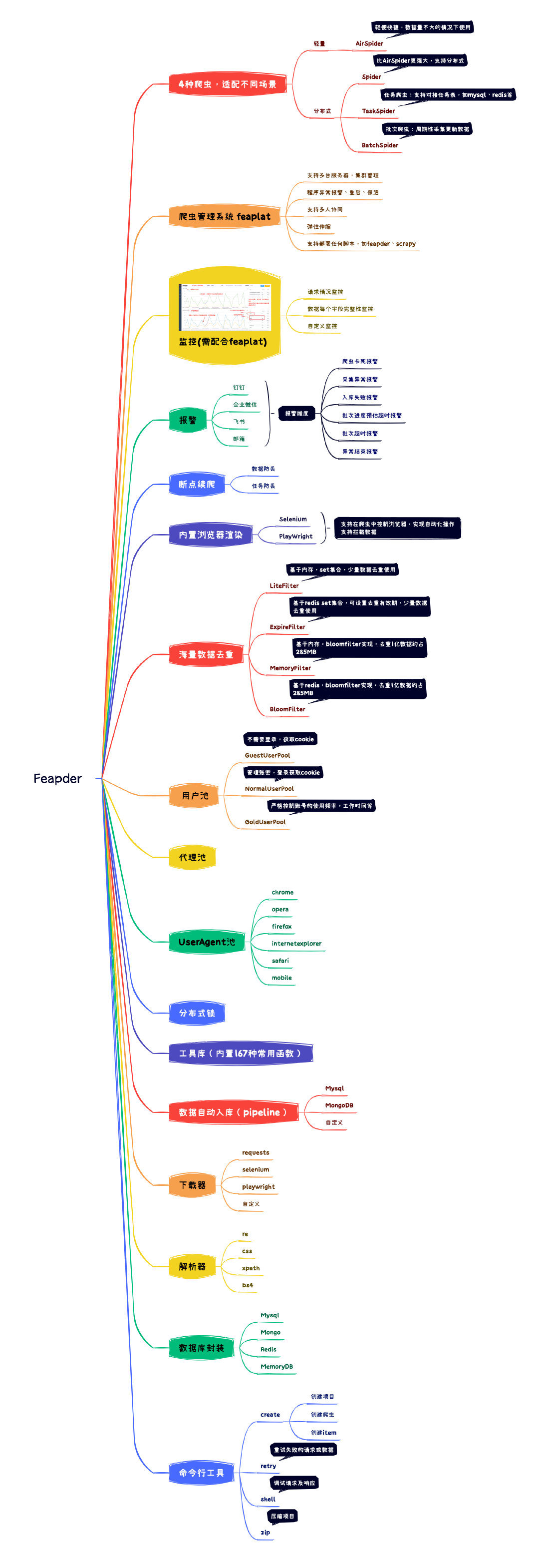

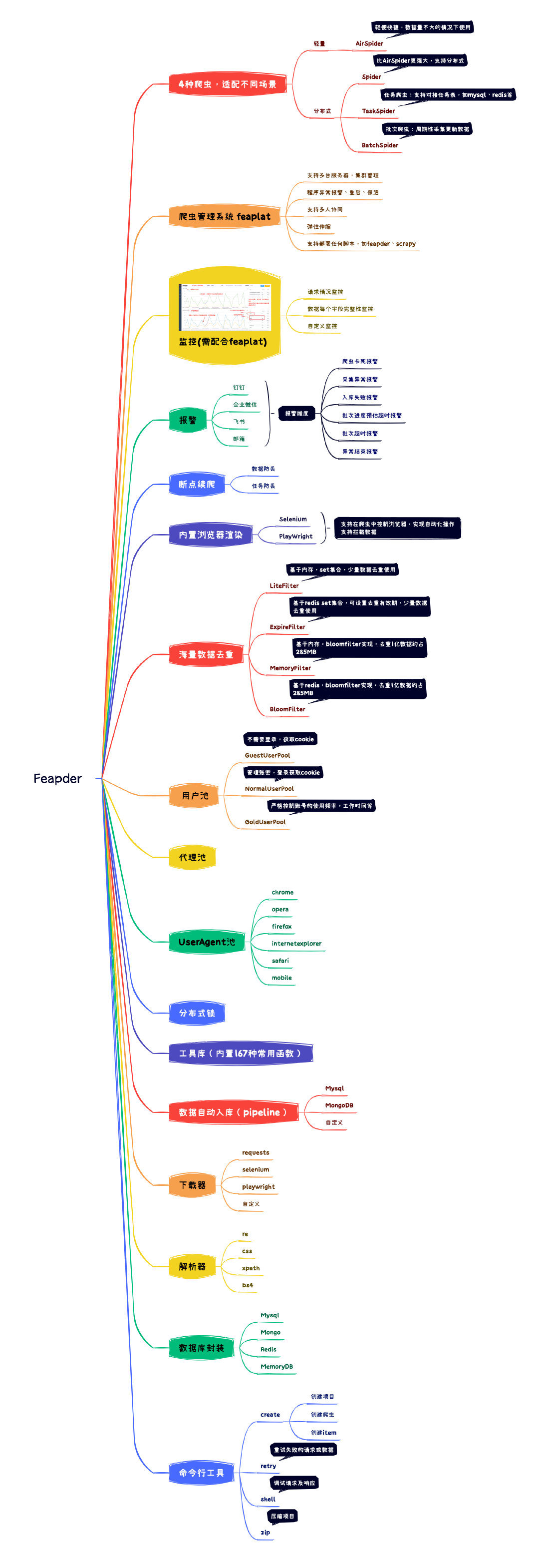

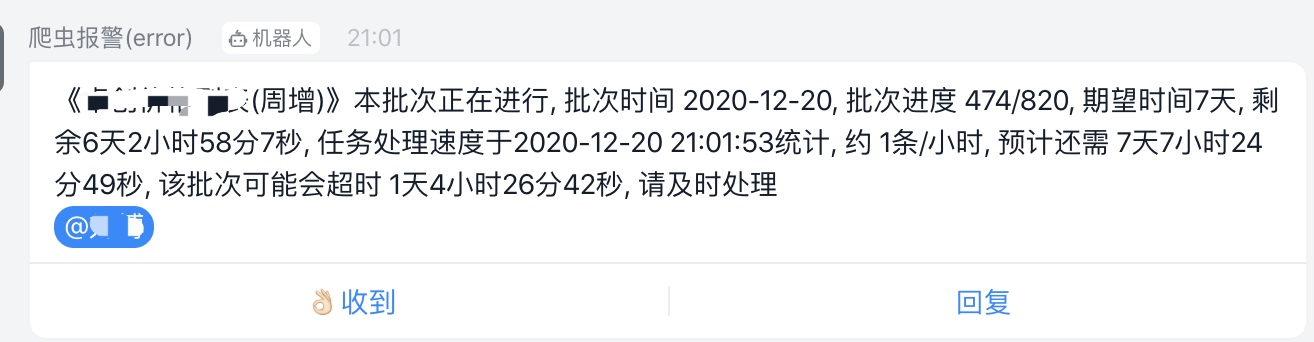

+1. feapder是一款上手简单,功能强大的Python爬虫框架,内置AirSpider、Spider、TaskSpider、BatchSpider四种爬虫解决不同场景的需求。

+2. 支持断点续爬、监控报警、浏览器渲染、海量数据去重等功能。

+3. 更有功能强大的爬虫管理系统feaplat为其提供方便的部署及调度

读音: `[ˈfiːpdə]`

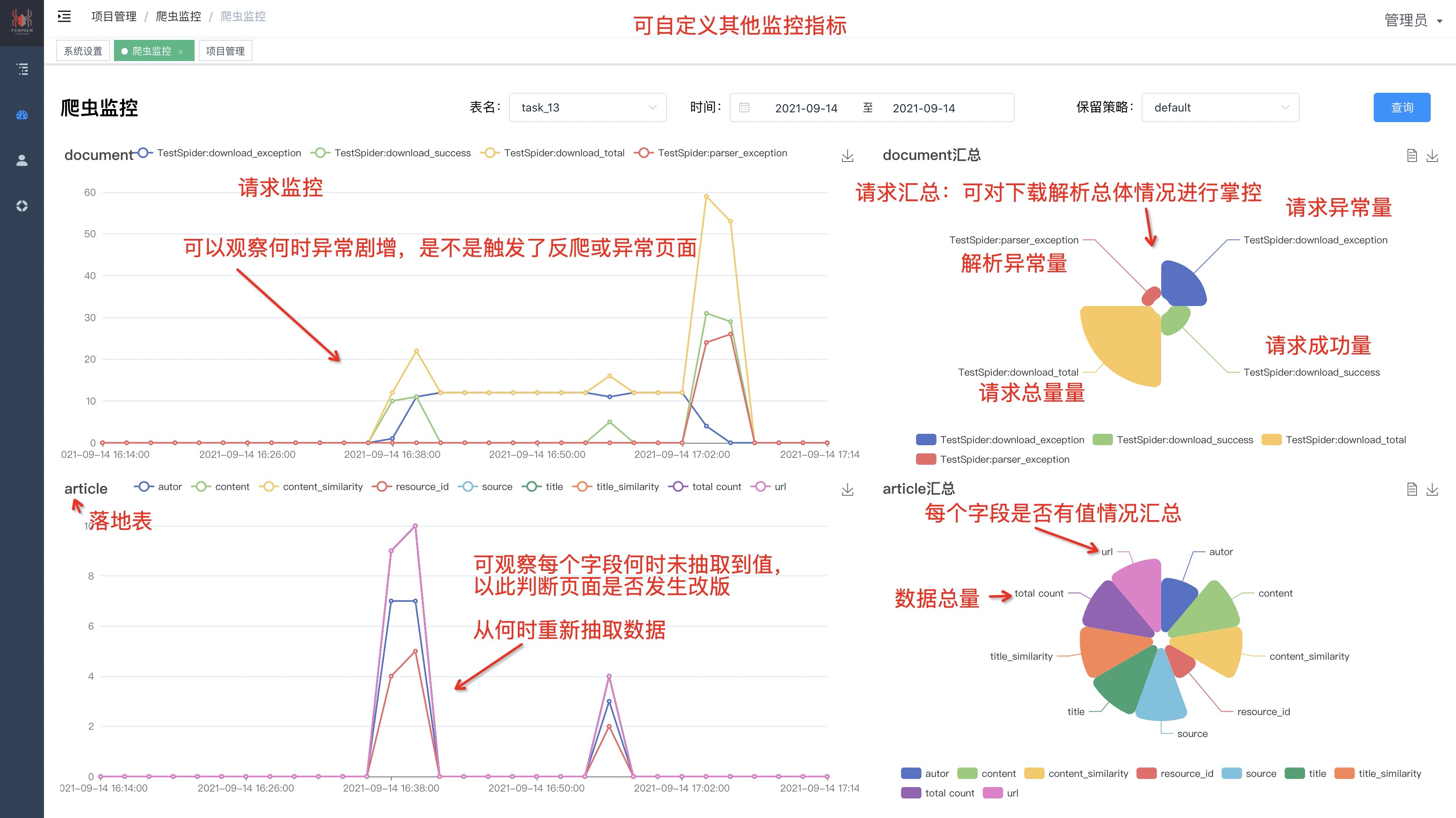

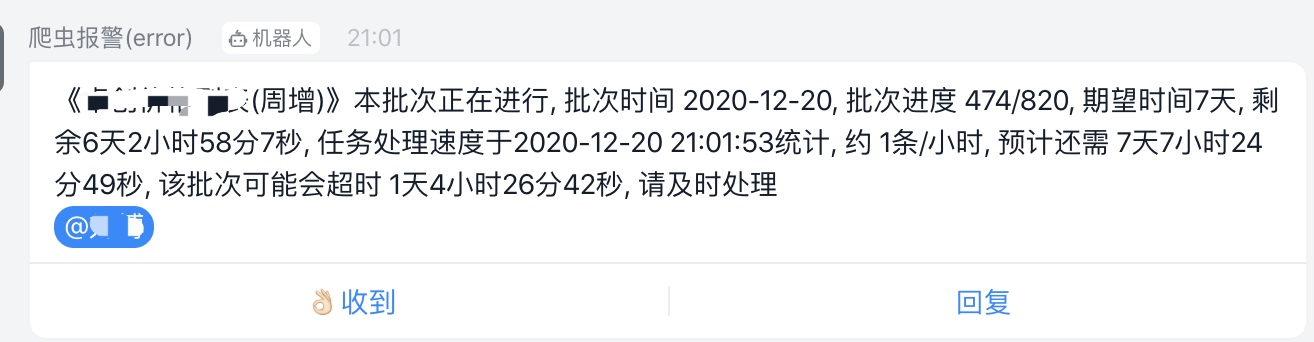

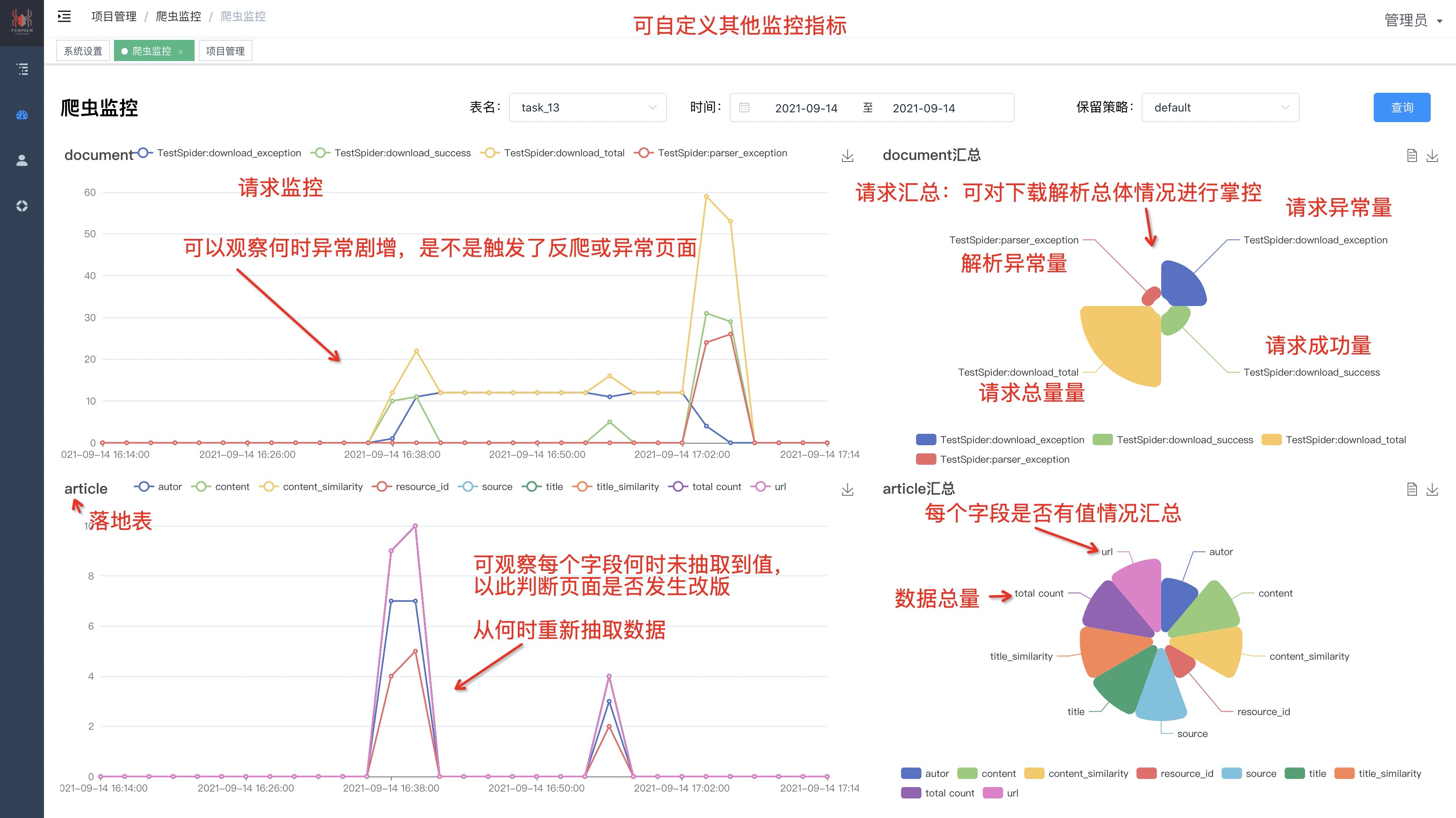

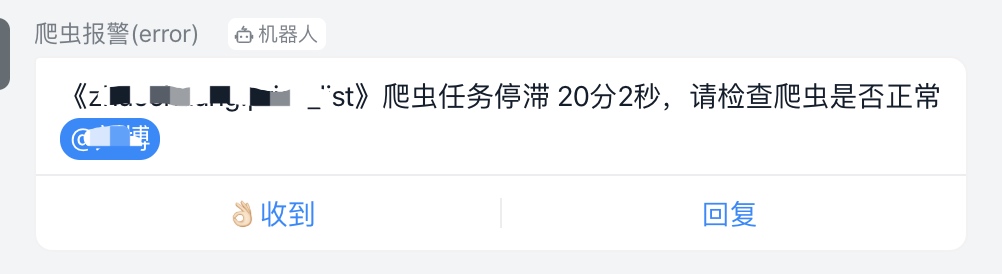

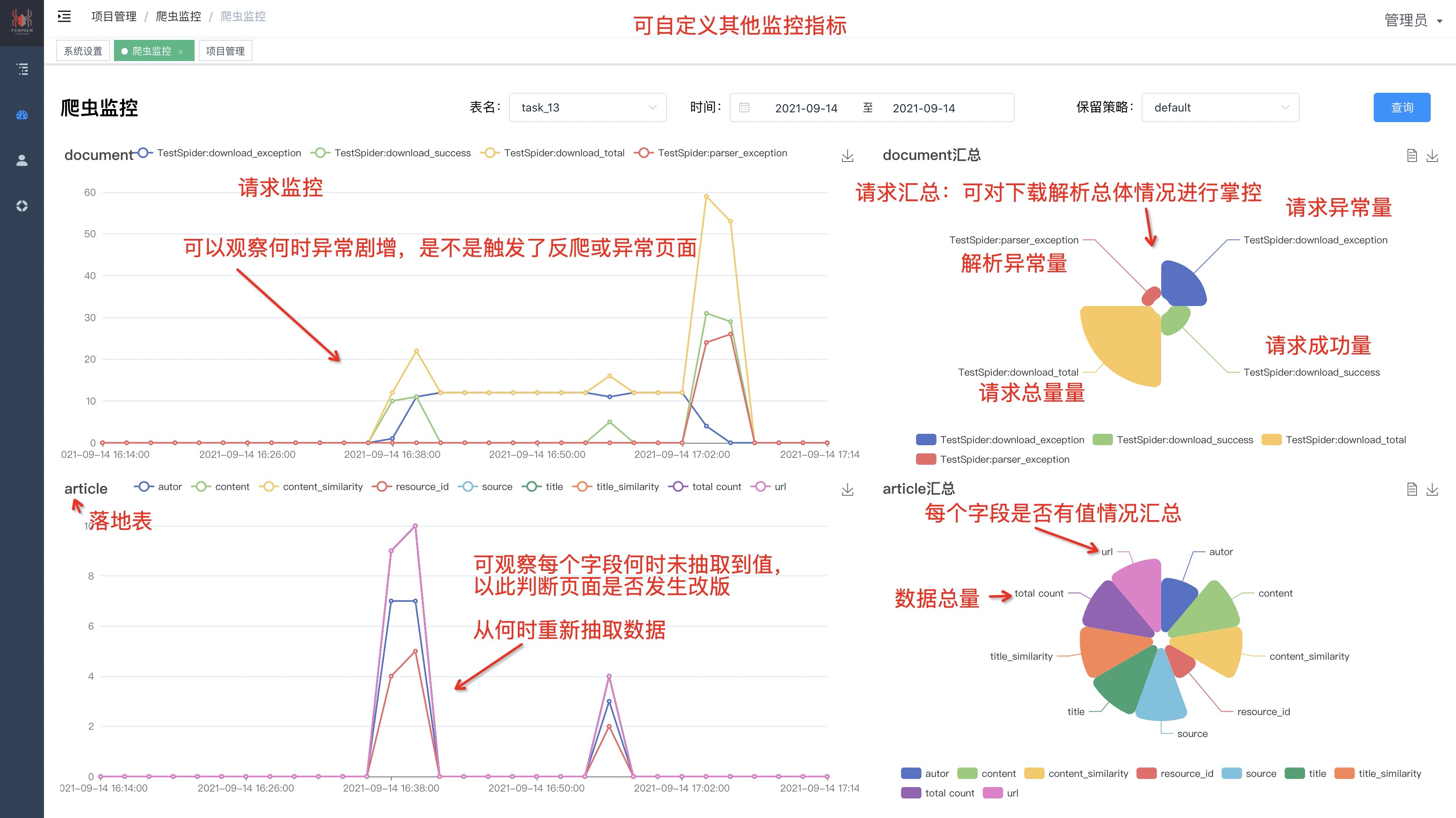

-### 1.拥有强大的监控,保障数据质量

-

-

-

-监控面板:[点击查看详情](http://feapder.com/#/feapder_platform/feaplat)

-

-### 2. 内置多维度的报警(支持 钉钉、企业微信、邮箱)

-

-

-

-

+

-### 3. 简单易用,内置三种爬虫,可应对各种需求场景

-

-- `AirSpider` 轻量爬虫:学习成本低,可快速上手

-

-- `Spider` 分布式爬虫:支持断点续爬、爬虫报警、数据自动入库等功能

-

-- `BatchSpider` 批次爬虫:可周期性的采集数据,自动将数据按照指定的采集周期划分。(如每7天全量更新一次商品销量的需求)

-

-**feapder**对外暴露的接口类似scrapy,可由scrapy快速迁移过来。支持**断点续爬**、**数据防丢**、**监控报警**、**浏览器渲染下载**、**海量数据去重**等功能

-

## 文档地址

-- 官方文档:http://feapder.com

-- 国内文档:https://boris-code.gitee.io/feapder

-- 境外文档:https://boris.org.cn/feapder

+- 官方文档:https://feapder.com

- github:https://github.com/Boris-code/feapder

- 更新日志:https://github.com/Boris-code/feapder/releases

- 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

+

## 环境要求:

- Python 3.6.0+

@@ -59,23 +36,30 @@

From PyPi:

-通用版

+精简版

```shell

-pip3 install feapder

-```

+pip install feapder

+```

+

+浏览器渲染版:

+```shell

+pip install "feapder[render]"

+```

完整版:

```shell

-pip3 install feapder[all]

-```

+pip install "feapder[all]"

+```

-通用版与完整版区别:

+三个版本区别:

-1. 完整版支持基于内存去重

+1. 精简版:不支持浏览器渲染、不支持基于内存去重、不支持入库mongo

+2. 浏览器渲染版:不支持基于内存去重、不支持入库mongo

+3. 完整版:支持所有功能

-完整版可能会安装出错,若安装出错,请参考[安装问题](https://boris.org.cn/feapder/#/question/%E5%AE%89%E8%A3%85%E9%97%AE%E9%A2%98)

+完整版可能会安装出错,若安装出错,请参考[安装问题](docs/question/安装问题.md)

## 小试一下

@@ -88,7 +72,6 @@ feapder create -s first_spider

创建后的爬虫代码如下:

```python

-

import feapder

@@ -124,10 +107,55 @@ FirstSpider|2021-02-09 14:55:14,620|air_spider.py|run|line:80|INFO| 无任务,

1. start_requests: 生产任务

2. parse: 解析数据

+

+## 感谢以下代理赞助商

+

+### Rapidproxy代理

+

+

+

+

+

+

-

-

## 简介

-**feapder是一款上手简单,功能强大的Python爬虫框架**

+1. feapder是一款上手简单,功能强大的Python爬虫框架,内置AirSpider、Spider、TaskSpider、BatchSpider四种爬虫解决不同场景的需求。

+2. 支持断点续爬、监控报警、浏览器渲染、海量数据去重等功能。

+3. 更有功能强大的爬虫管理系统feaplat为其提供方便的部署及调度

读音: `[ˈfiːpdə]`

-### 1.拥有强大的监控,保障数据质量

-

-

-

-监控面板:[点击查看详情](http://feapder.com/#/feapder_platform/feaplat)

-

-### 2. 内置多维度的报警(支持 钉钉、企业微信、邮箱)

-

-

-

-

+

-### 3. 简单易用,内置三种爬虫,可应对各种需求场景

-

-- `AirSpider` 轻量爬虫:学习成本低,可快速上手

-

-- `Spider` 分布式爬虫:支持断点续爬、爬虫报警、数据自动入库等功能

-

-- `BatchSpider` 批次爬虫:可周期性的采集数据,自动将数据按照指定的采集周期划分。(如每7天全量更新一次商品销量的需求)

-

-**feapder**对外暴露的接口类似scrapy,可由scrapy快速迁移过来。支持**断点续爬**、**数据防丢**、**监控报警**、**浏览器渲染下载**、**海量数据去重**等功能

-

## 文档地址

-- 官方文档:http://feapder.com

-- 国内文档:https://boris-code.gitee.io/feapder

-- 境外文档:https://boris.org.cn/feapder

+- 官方文档:https://feapder.com

- github:https://github.com/Boris-code/feapder

- 更新日志:https://github.com/Boris-code/feapder/releases

- 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

+

## 环境要求:

- Python 3.6.0+

@@ -59,23 +36,30 @@

From PyPi:

-通用版

+精简版

```shell

-pip3 install feapder

-```

+pip install feapder

+```

+

+浏览器渲染版:

+```shell

+pip install "feapder[render]"

+```

完整版:

```shell

-pip3 install feapder[all]

-```

+pip install "feapder[all]"

+```

-通用版与完整版区别:

+三个版本区别:

-1. 完整版支持基于内存去重

+1. 精简版:不支持浏览器渲染、不支持基于内存去重、不支持入库mongo

+2. 浏览器渲染版:不支持基于内存去重、不支持入库mongo

+3. 完整版:支持所有功能

-完整版可能会安装出错,若安装出错,请参考[安装问题](https://boris.org.cn/feapder/#/question/%E5%AE%89%E8%A3%85%E9%97%AE%E9%A2%98)

+完整版可能会安装出错,若安装出错,请参考[安装问题](docs/question/安装问题.md)

## 小试一下

@@ -88,7 +72,6 @@ feapder create -s first_spider

创建后的爬虫代码如下:

```python

-

import feapder

@@ -124,10 +107,55 @@ FirstSpider|2021-02-09 14:55:14,620|air_spider.py|run|line:80|INFO| 无任务,

1. start_requests: 生产任务

2. parse: 解析数据

+

+## 感谢以下代理赞助商

+

+### Rapidproxy代理

+

+

+

+

+

+ +

+

+

+### SWIFTPROXY

+

+

+

+

+

+

+

+

+

+### SWIFTPROXY

+

+

+

+

+

+ +

+

+

+### NovProxy

+

+

+

+

+

+

+

+

+

+### NovProxy

+

+

+

+

+

+ +

+

+

+

+## 参与贡献

+

+贡献之前请先阅读 [贡献指南](./CONTRIBUTING.md)

+

+感谢所有做过贡献的人!

+

+

+

+

+

+

+

+## 参与贡献

+

+贡献之前请先阅读 [贡献指南](./CONTRIBUTING.md)

+

+感谢所有做过贡献的人!

+

+

+  +

+

## 爬虫工具推荐

1. 爬虫在线工具库:http://www.spidertools.cn

-2. 验证码识别库:https://github.com/sml2h3/ddddocr

+2. 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

+3. 验证码识别库:https://github.com/sml2h3/ddddocr

## 微信赞赏

@@ -144,14 +172,16 @@ FirstSpider|2021-02-09 14:55:14,620|air_spider.py|run|line:80|INFO| 无任务,

+

+

## 爬虫工具推荐

1. 爬虫在线工具库:http://www.spidertools.cn

-2. 验证码识别库:https://github.com/sml2h3/ddddocr

+2. 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

+3. 验证码识别库:https://github.com/sml2h3/ddddocr

## 微信赞赏

@@ -144,14 +172,16 @@ FirstSpider|2021-02-09 14:55:14,620|air_spider.py|run|line:80|INFO| 无任务,

| 知识星球:17321694 |

作者微信: boris_tm |

- QQ群号:750614606 |

+ QQ群号:521494615 |

|

|

-  |

+  |

-

- 加好友备注:feapder

\ No newline at end of file

+

+

+

+ 加好友备注:feapder

diff --git a/docs/README.md b/docs/README.md

index 1e16f601..08ccb6aa 100644

--- a/docs/README.md

+++ b/docs/README.md

@@ -10,37 +10,17 @@

## 简介

-**feapder是一款上手简单,功能强大的Python爬虫框架**

+1. feapder是一款上手简单,功能强大的Python爬虫框架,内置AirSpider、Spider、TaskSpider、BatchSpider四种爬虫解决不同场景的需求。

+2. 支持断点续爬、监控报警、浏览器渲染、海量数据去重等功能。

+3. 更有功能强大的爬虫管理系统feaplat为其提供方便的部署及调度

读音: `[ˈfiːpdə]`

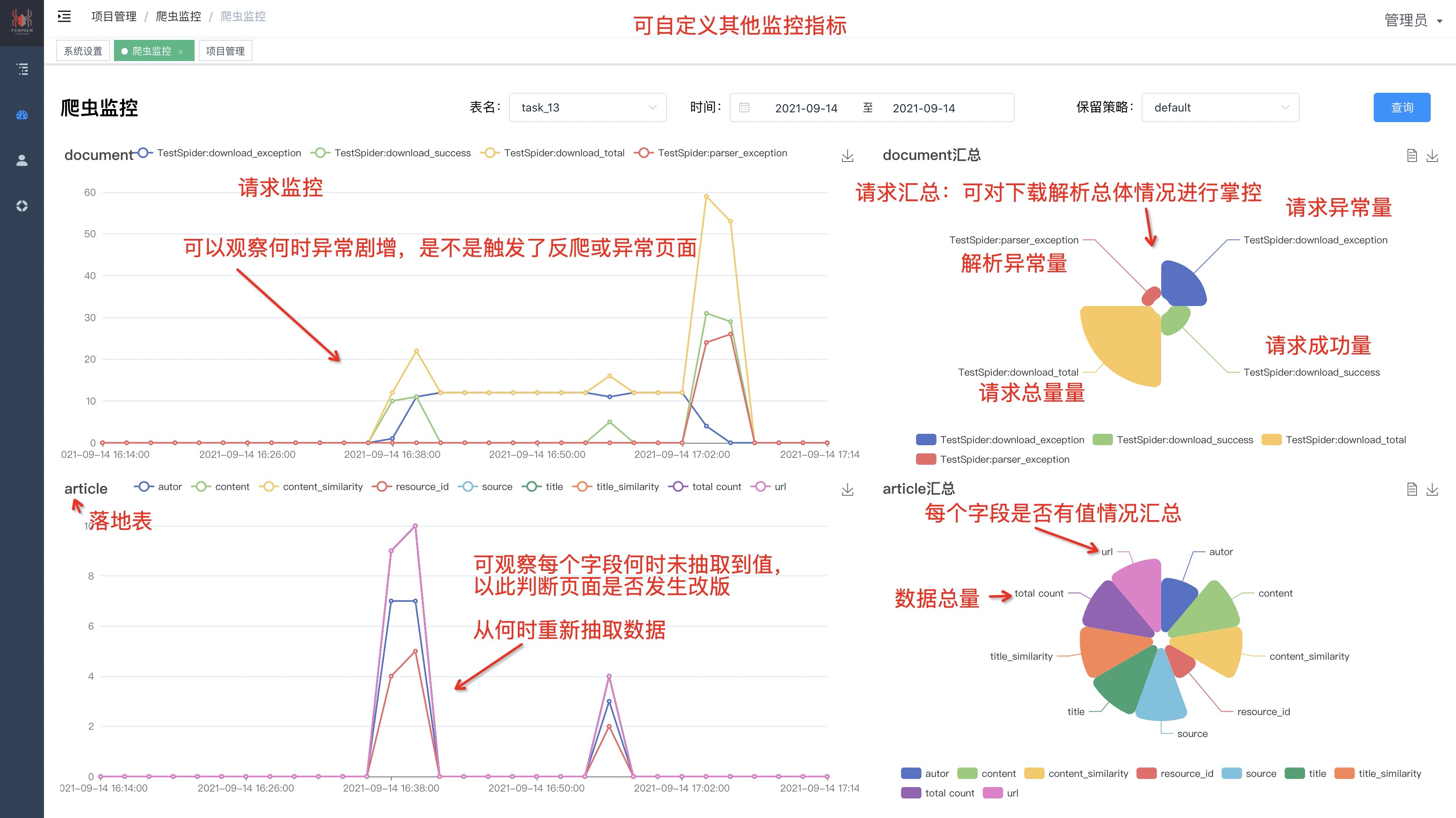

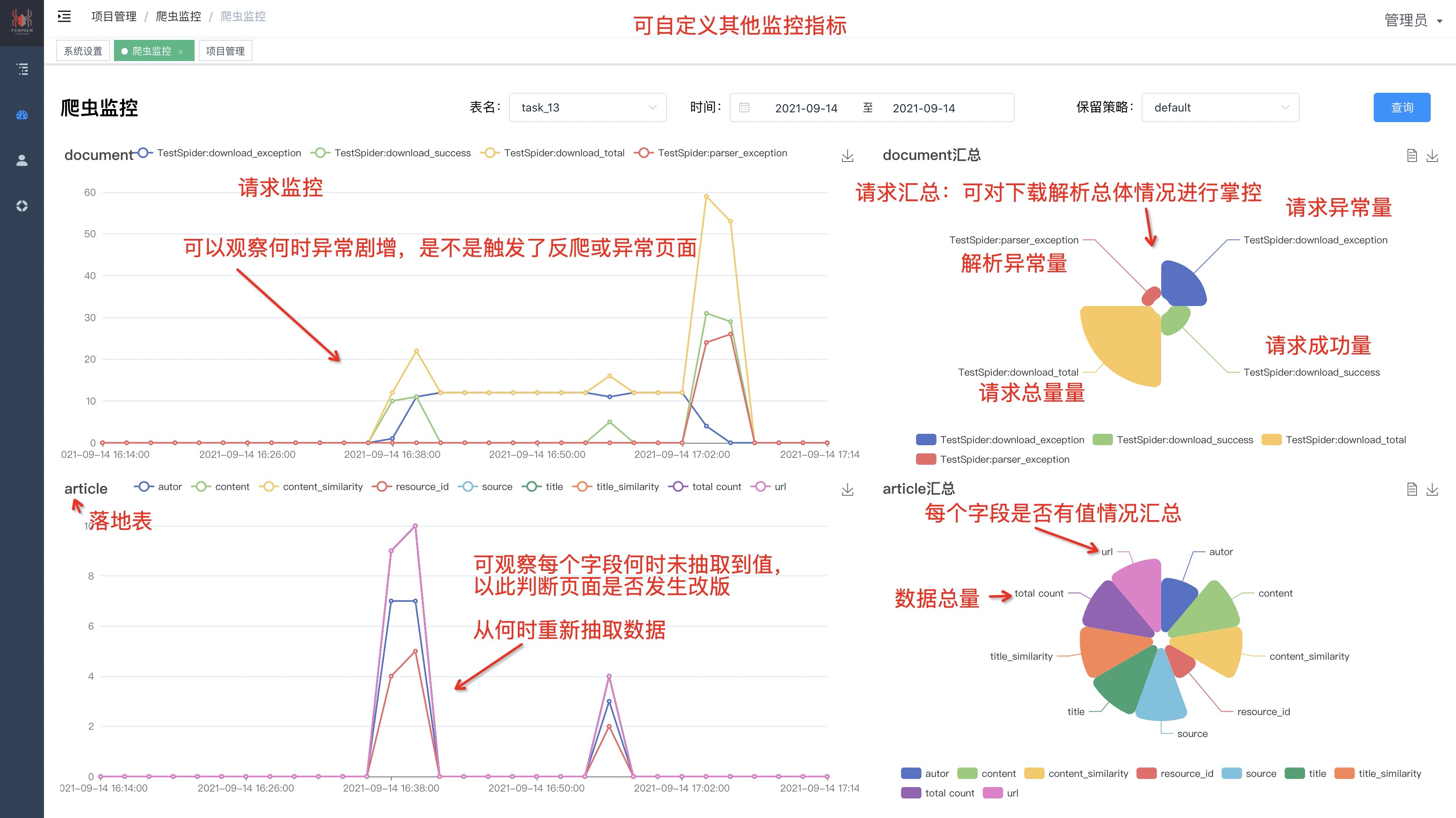

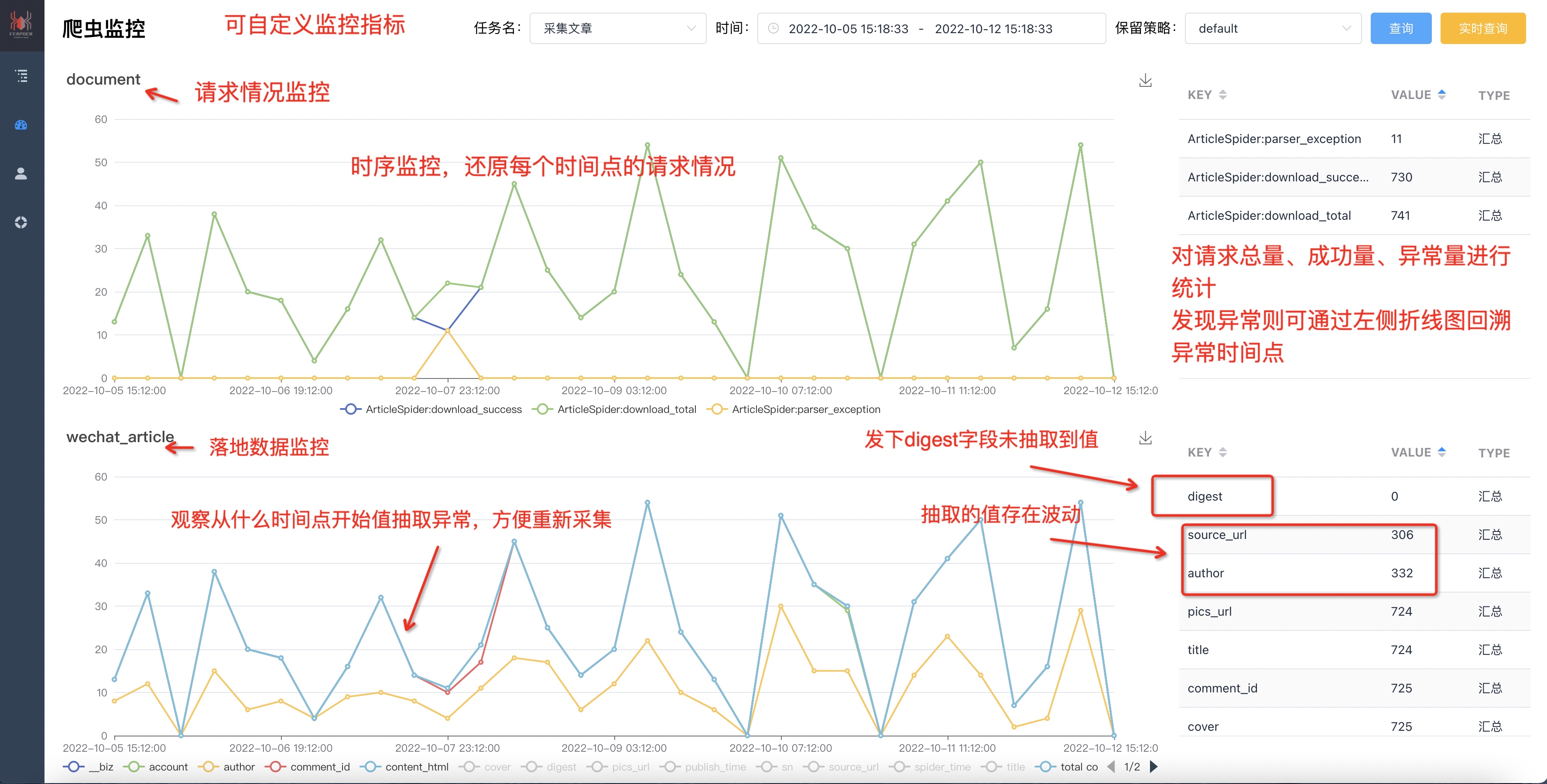

-### 1.拥有强大的监控,保障数据质量

-

-

-

-监控面板:[点击查看详情](http://feapder.com/#/feapder_platform/feaplat)

-

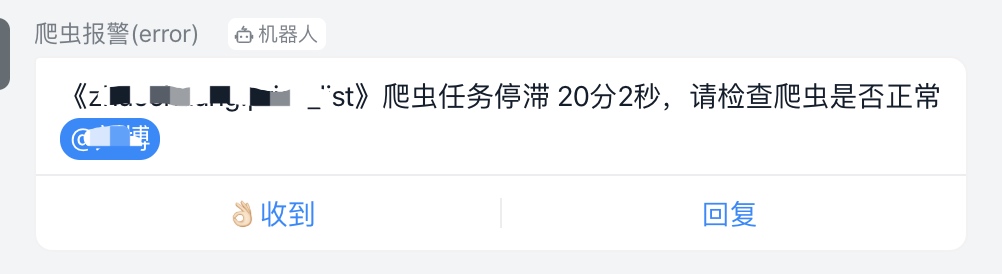

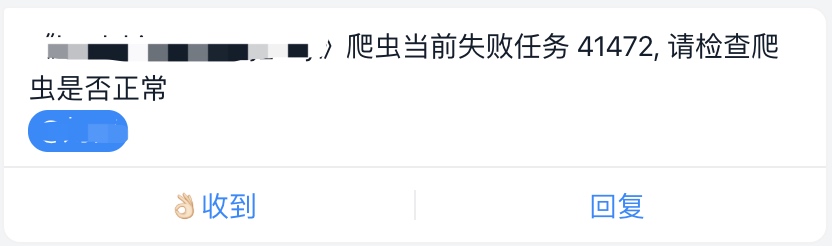

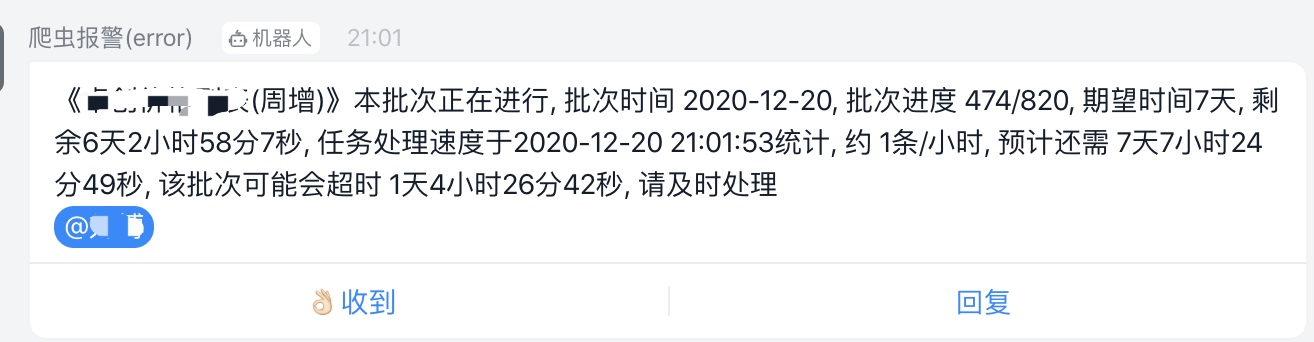

-### 2. 内置多维度的报警(支持 钉钉、企业微信、邮箱)

-

-

-

-

-

-### 3. 简单易用,内置三种爬虫,可应对各种需求场景

-

-- `AirSpider` 轻量爬虫:学习成本低,可快速上手

-

-- `Spider` 分布式爬虫:支持断点续爬、爬虫报警、数据自动入库等功能

-

-- `BatchSpider` 批次爬虫:可周期性的采集数据,自动将数据按照指定的采集周期划分。(如每7天全量更新一次商品销量的需求)

-

-**feapder**对外暴露的接口类似scrapy,可由scrapy快速迁移过来。支持**断点续爬**、**数据防丢**、**监控报警**、**浏览器渲染下载**、**海量数据去重**等功能

+

## 文档地址

-- 官方文档:http://feapder.com

-- 国内文档:https://boris-code.gitee.io/feapder

-- 境外文档:https://boris.org.cn/feapder

+- 官方文档:https://feapder.com

- github:https://github.com/Boris-code/feapder

- 更新日志:https://github.com/Boris-code/feapder/releases

- 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

@@ -55,21 +35,29 @@

From PyPi:

-通用版

+精简版

```shell

-pip3 install feapder

-```

+pip install feapder

+```

+

+浏览器渲染版:

+```shell

+pip install "feapder[render]"

+```

完整版:

```shell

-pip3 install feapder[all]

-```

+pip install "feapder[all]"

+```

-通用版与完整版区别:

+三个版本区别:

+

+1. 精简版:不支持浏览器渲染、不支持基于内存去重、不支持入库mongo

+2. 浏览器渲染版:不支持基于内存去重、不支持入库mongo

+3. 完整版:支持所有功能

-1. 完整版支持基于内存去重

完整版可能会安装出错,若安装出错,请参考[安装问题](question/安装问题)

@@ -98,7 +86,7 @@ class FirstSpider(feapder.AirSpider):

if __name__ == "__main__":

FirstSpider().start()

-

+

```

直接运行,打印如下:

@@ -123,32 +111,34 @@ FirstSpider|2021-02-09 14:55:14,620|air_spider.py|run|line:80|INFO| 无任务,

## 爬虫工具推荐

1. 爬虫在线工具库:http://www.spidertools.cn

-2. 验证码识别库:https://github.com/sml2h3/ddddocr

+2. 爬虫管理系统:http://feapder.com/#/feapder_platform/feaplat

+3. 验证码识别库:https://github.com/sml2h3/ddddocr

-## 微信赞赏

+

## 学习交流

-

-

- | 知识星球:17321694 |

- 作者微信: boris_tm |

- QQ群号:750614606 |

-

-

+

+

+ | 知识星球:17321694 |

+ 作者微信: boris_tm |

+ QQ群号:521494615 |

+

+

-

- |

-  |

-  |

-

-

-

+

+  |

+  |

+

+

+

+

加好友备注:feapder

\ No newline at end of file

diff --git a/docs/_sidebar.md b/docs/_sidebar.md

index c8f98d37..bef51b37 100644

--- a/docs/_sidebar.md

+++ b/docs/_sidebar.md

@@ -11,6 +11,7 @@

* [使用前必读](usage/使用前必读.md)

* [轻量爬虫-AirSpider](usage/AirSpider.md)

* [分布式爬虫-Spider](usage/Spider.md)

+ * [任务爬虫-TaskSpider](usage/TaskSpider.md)

* [批次爬虫-BatchSpider](usage/BatchSpider.md)

* [爬虫集成](usage/爬虫集成.md)

@@ -19,7 +20,8 @@

* [响应-Response](source_code/Response.md)

* [代理使用说明](source_code/proxy.md)

* [用户池说明](source_code/UserPool.md)

- * [浏览器渲染](source_code/浏览器渲染.md)

+ * [浏览器渲染-Selenium](source_code/浏览器渲染-Selenium.md)

+ * [浏览器渲染-Playwright](source_code/浏览器渲染-Playwright)

* [解析器-BaseParser](source_code/BaseParser.md)

* [批次解析器-BatchParser](source_code/BatchParser.md)

* [Spider进阶](source_code/Spider进阶.md)

@@ -36,6 +38,7 @@

* [海量数据去重-dedup](source_code/dedup.md)

* [报警及监控](source_code/报警及监控.md)

* [监控打点](source_code/监控打点.md)

+ * [自定义下载器](source_code/custom_downloader.md)

* 爬虫管理系统

* [简介及部署](feapder_platform/feaplat.md)

@@ -45,4 +48,5 @@

* 常见问题

* [安装问题](question/安装问题.md)

* [运行问题](question/运行问题.md)

- * [请求问题](question/请求问题.md)

\ No newline at end of file

+ * [请求问题](question/请求问题.md)

+ * [setting不生效问题](question/setting不生效问题.md)

\ No newline at end of file

diff --git a/docs/command/cmdline.md b/docs/command/cmdline.md

index 91aadd81..74691832 100644

--- a/docs/command/cmdline.md

+++ b/docs/command/cmdline.md

@@ -24,43 +24,39 @@

Available commands:

create create project、feapder、item and so on

shell debug response

+ zip zip project

Use "feapder -h" to see more info about a command

-可见feapder支持`create`及`shell`两种命令

+可见feapder支持`create`、`shell`及`zip`三种命令

## 2. feapder create

使用feapder create 可快速创建项目、爬虫、item等,具体支持的命令可输入`feapder create -h` 查看使用帮助

> feapder create -h

- usage: feapder [-h] [-p] [-s [...]] [-i [...]] [-t] [-init] [-j] [-sj]

- [--host] [--port] [--username] [--password] [--db]

+ usage: cmdline.py [-h] [-p] [-s] [-i] [-t] [-init] [-j] [-sj] [-c] [--params] [--setting] [--host] [--port] [--username] [--password] [--db]

生成器

-

+

optional arguments:

- -h, --help show this help message and exit

- -p , --project 创建项目 如 feapder create -p

- -s [ ...], --spider [ ...]

- 创建爬虫 如 feapder create -s

- spider_type=1 AirSpider; spider_type=2 Spider;

- spider_type=3 BatchSpider;

- -i [ ...], --item [ ...]

- 创建item 如 feapder create -i test 则生成test表对应的item。

- 支持like语法模糊匹配所要生产的表。 若想生成支持字典方式赋值的item,则create -item

- test 1

- -t , --table 根据json创建表 如 feapder create -t

- -init 创建__init__.py 如 feapder create -init

- -j, --json 创建json

- -sj, --sort_json 创建有序json

- --setting 创建全局配置文件 feapder create -setting

- --host mysql 连接地址

- --port mysql 端口

- --username mysql 用户名

- --password mysql 密码

- --db mysql 数据库名

+ -h, --help show this help message and exit

+ -p , --project 创建项目 如 feapder create -p

+ -s , --spider 创建爬虫 如 feapder create -s

+ -i , --item 创建item 如 feapder create -i 支持模糊匹配 如 feapder create -i %table_name%

+ -t , --table 根据json创建表 如 feapder create -t

+ -init 创建__init__.py 如 feapder create -init

+ -j, --json 创建json

+ -sj, --sort_json 创建有序json

+ -c, --cookies 创建cookie

+ --params 解析地址中的参数

+ --setting 创建全局配置文件feapder create --setting

+ --host mysql 连接地址

+ --port mysql 端口

+ --username mysql 用户名

+ --password mysql 密码

+ --db mysql 数据库名

具体使用方法如下:

@@ -87,23 +83,23 @@

### 2. 创建爬虫

-爬虫分为3种,分别为 轻量级爬虫(AirSpider)、分布式爬虫(Spider)以及 批次爬虫(BatchSpider)

-

命令

- feapder create -s

-

-* AirSpider 对应的 spider_type 值为 1

-* Spider 对应的 spider_type 值为 2

-* BatchSpider 对应的 spider_type 值为 3

-* 默认 spider_type 值为 1

-

-AirSpider爬虫示例:

+ feapder create -s

+

+示例:创建名为first_spider的爬虫

- feapder create -s first_spider 1

+```shell

+feapder create -s first_spider

-

-生成first_spider.py, 内容如下:

+请选择爬虫模板

+> AirSpider

+ Spider

+ TaskSpider

+ BatchSpider

+```

+

+输入命令后,可以按上下键选择爬虫模板,如选择 AirSpider爬虫模板,生成first_spider.py, 内容如下:

import feapder

@@ -120,7 +116,7 @@ AirSpider爬虫示例:

FirstSpider().start()

-若为项目结构,建议先进入到spiders目录下,再创建爬虫

+若在项目下创建,建议先进入到spiders目录下,再创建爬虫

### 3. 创建 item

@@ -130,6 +126,16 @@ item为与数据库表的映射,与数据入库的逻辑相关。

命令

feapder create -i

+

+输出:

+

+```

+请选择Item类型

+> Item

+ Item 支持字典赋值

+ UpdateItem

+ UpdateItem 支持字典赋值

+```

示例

@@ -189,9 +195,9 @@ class SpiderDataItem(Item):

这样,以后所有的项目setting.py中均可不配置mysql连接信息

-**若item字段过多,不想逐一赋值,可通过如下方式创建**

+**若item字段过多,不想逐一赋值,可选择支持字典赋值的Item类型创建**

- feapder create -i spider_data 1

+

生成:

@@ -218,7 +224,7 @@ item = SpiderDataItem(**response_data)

```

-### 4. 创建json 或 有序json

+### 4. 创建json或有序json

此命令和快速将 `xxx:xxx` 这种字符串格式转为json格式,常用于将网页或者抓包工具抓取出来的header、cookie转为json

diff --git a/docs/feapder_platform/feaplat.md b/docs/feapder_platform/feaplat.md

index 83f028ca..405f3e0c 100644

--- a/docs/feapder_platform/feaplat.md

+++ b/docs/feapder_platform/feaplat.md

@@ -6,54 +6,61 @@

读音: `[ˈfiːplæt] `

-

+

+

## 特性

-1. 支持任何python脚本,包括不限于`feapder`、`scrapy`

-2. 支持浏览器渲染,支持有头模式。浏览器支持`playwright`、`selenium`

-3. 支持部署服务,可自动负载均衡

-4. 支持服务器集群管理

+1. 支持部署任何程序,包括不限于`feapder`、`scrapy`

+2. 支持集群管理,部署分布式爬虫可一键扩展进程数

+3. 支持部署服务,且可自动实现服务负载均衡

+4. 支持程序异常报警、重启、保活

5. 支持监控,监控内容可自定义

-6. 支持起多个实例,如分布式爬虫场景

-7. 支持弹性伸缩

-8. 支持4种定时启动方式

-9. 支持自定义worker镜像,如自定义java的运行环境、机器学习环境等,即根据自己的需求自定义(feaplat分为`master-调度端`和`worker-运行任务端`)

-10. docker一键部署,架设在docker swarm集群上

-

-

-## 为什么用feaplat爬虫管理系统

+6. 支持4种定时调度模式

+7. 自动从git仓库拉取最新的代码运行,支持指定分支

+8. 支持多人协同

+9. 支持浏览器渲染,支持有头模式。浏览器支持`playwright`、`selenium`

+10. 支持弹性伸缩

+12. 支持自定义worker镜像,如自定义java的运行环境、node运行环境等,即根据自己的需求自定义(feaplat分为`master-调度端`和`worker-运行任务端`)

+13. docker一键部署,架设在docker swarm集群上

-**市面上的爬虫管理系统**

+## 功能概览

-

+暂时不支持 苹果电脑的Apple芯片

-worker节点常驻,且运行多个任务,不能弹性伸缩,任务之前会相互影响,稳定性得不到保障

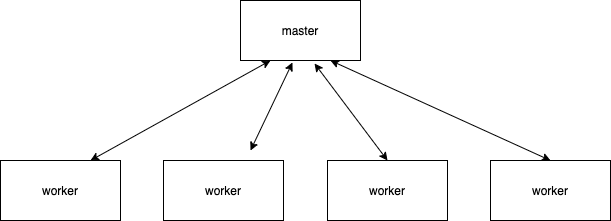

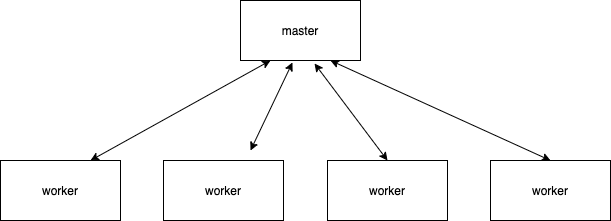

-

-**feaplat爬虫管理系统**

+### 1. 项目管理

-

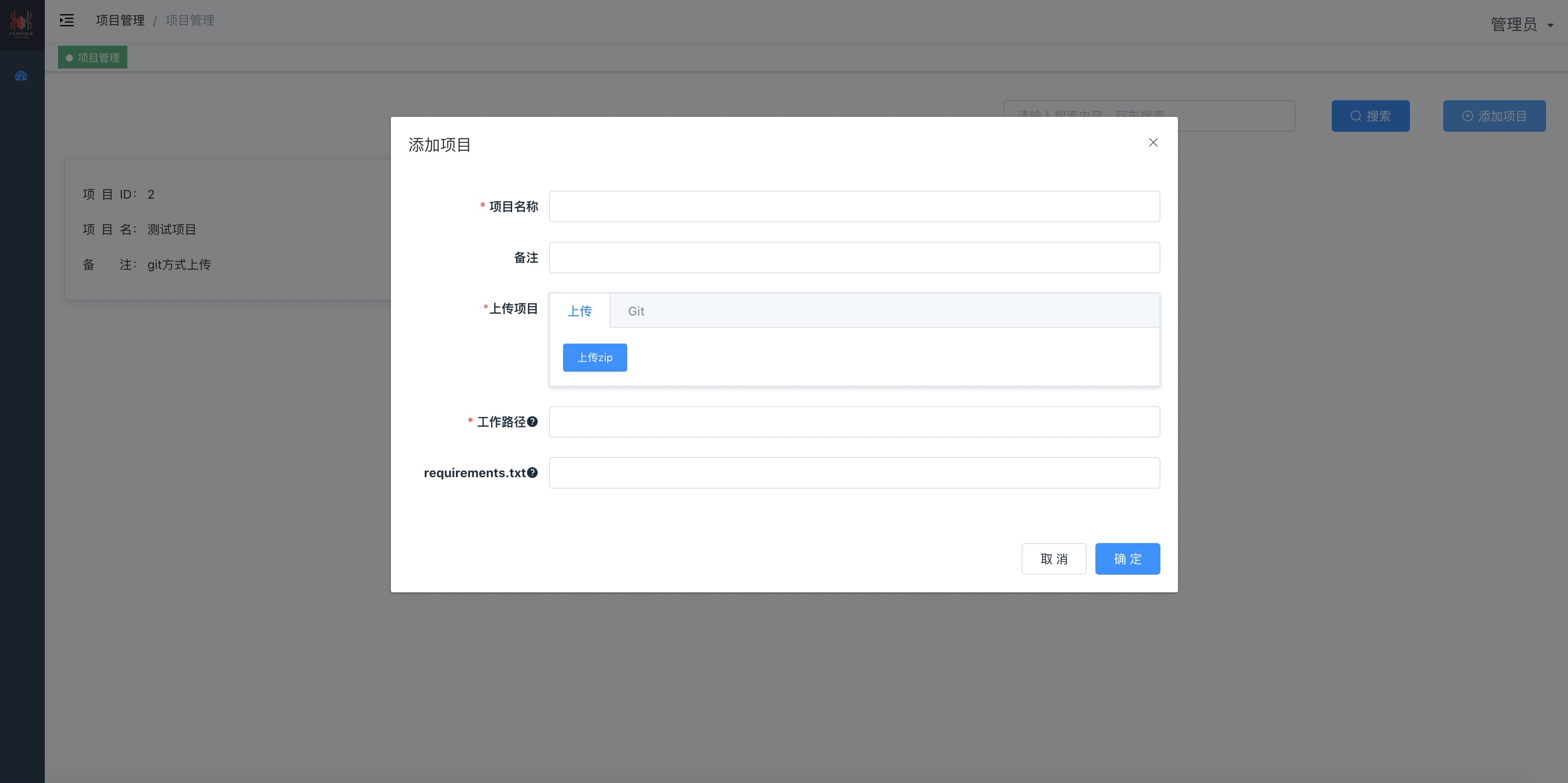

+添加/编辑项目

-worker节点根据任务动态生成,一个worker只运行一个任务实例,任务做完worker销毁,稳定性高;多个服务器间自动均衡分配,弹性伸缩

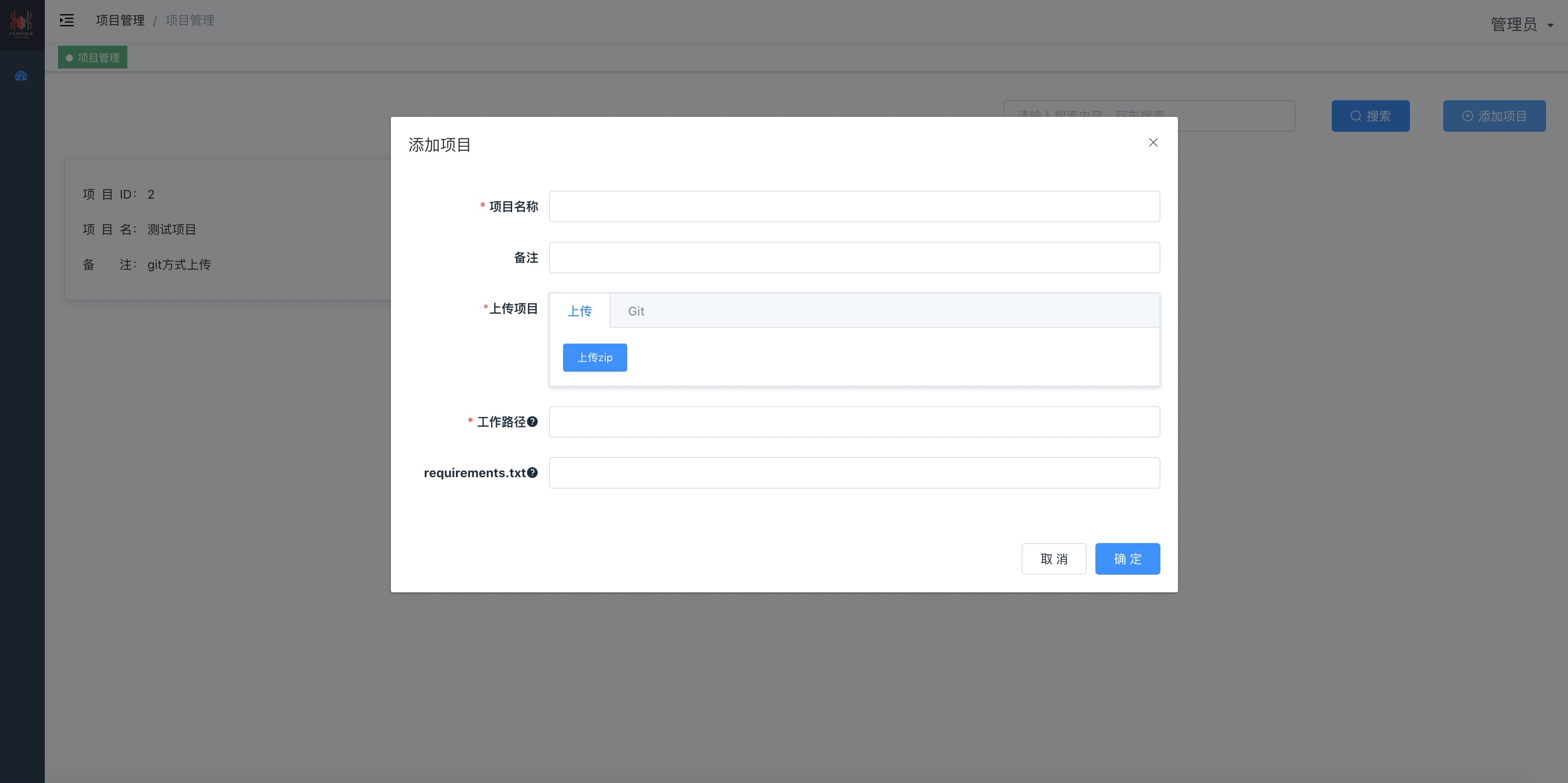

+

+- 支持 git和zip两种方式上传项目

+- 根据requirements.txt自动安装依赖包

+- 可选择多个人参与项目

-## 功能概览

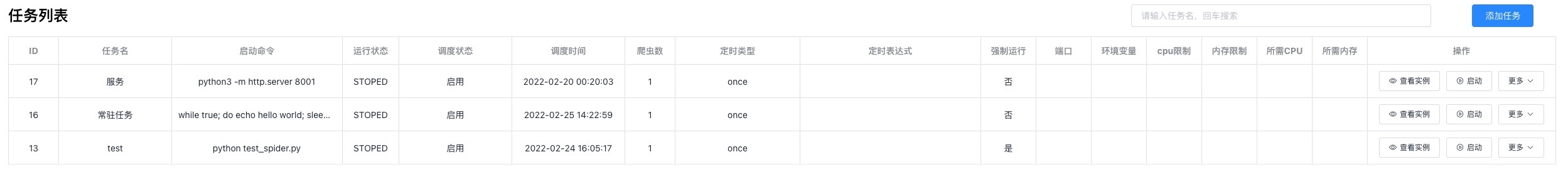

+### 2. 任务管理

-### 1. 项目管理

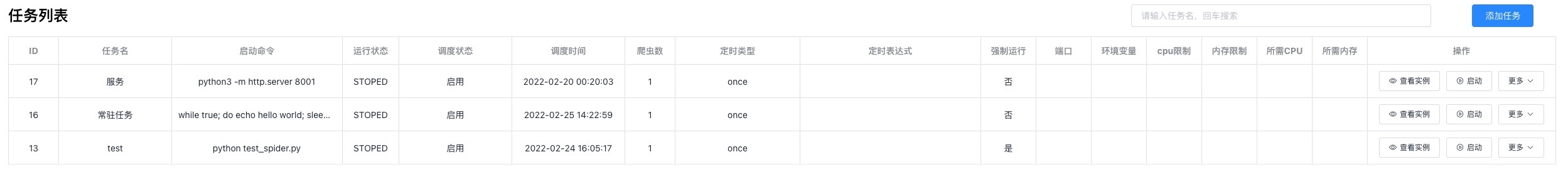

+

+

-添加/编辑项目

-

+- 支持一键启动多个任务实例(分布式爬虫场景或者需要启动多个进程的场景)

+- 支持4种调度模式

+- 标签:给任务分类使用

+- 强制运行:(上一次任务没结束,本次是否运行,是则会停止上一次任务,然后运行本次调度)

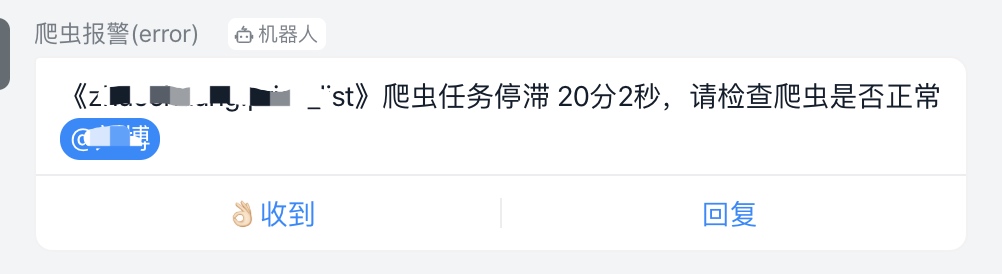

+- 异常重启:当部署的程序异常退出,是否自动重启,且会报警

+

+- 支持限制程序运行的CPU、内存等。

-### 2. 任务管理

-

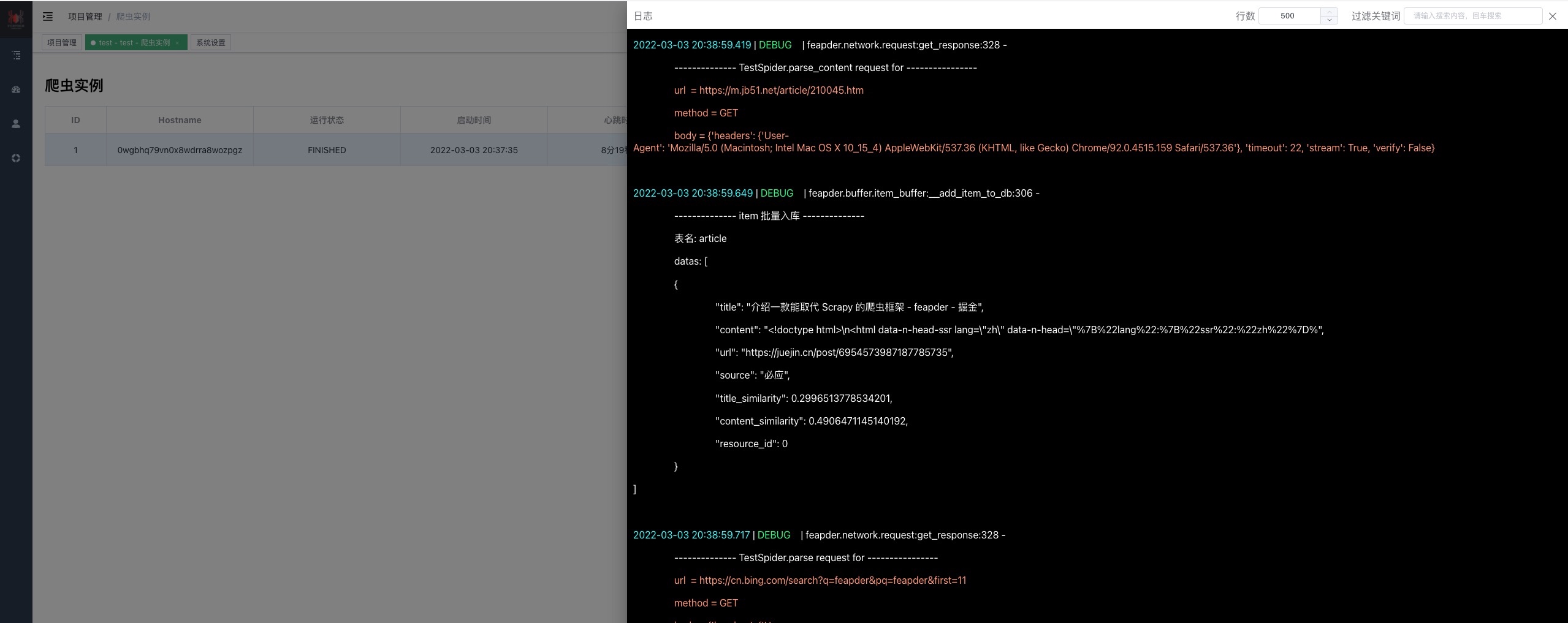

+### 3. 任务实例

+一键部署了20份程序,每个程序独占一个进程,可从列表看每个进程部署到哪台服务器上了,运行状态是什么

-### 3. 任务实例

+

-日志

-

+实时查看日志

+

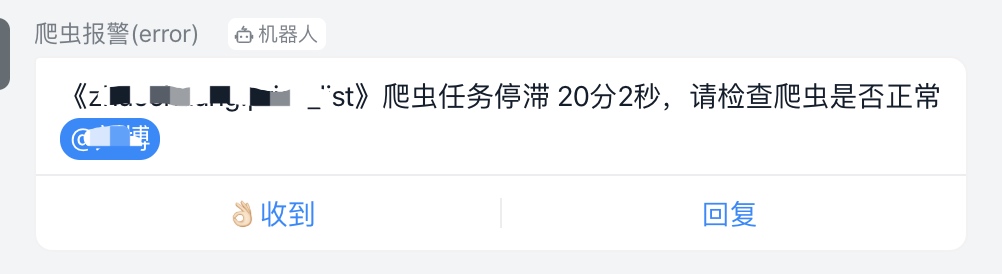

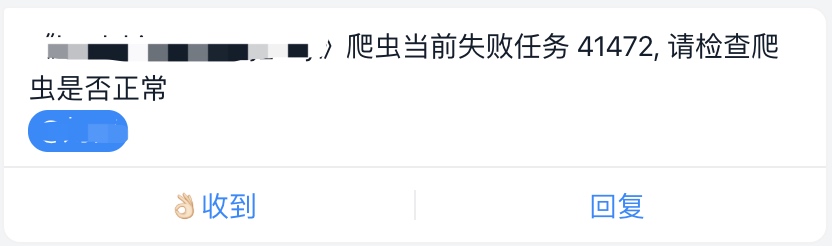

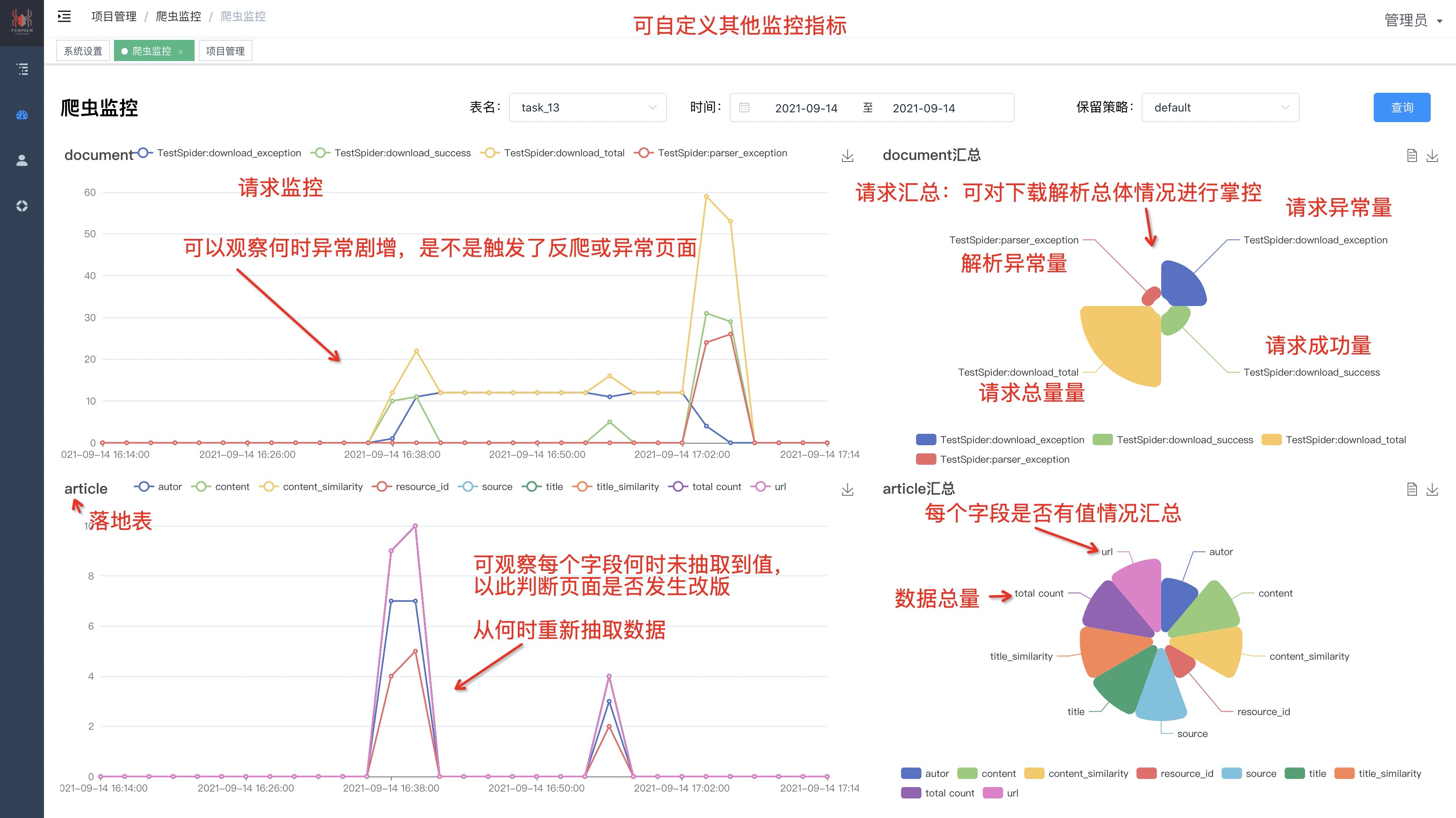

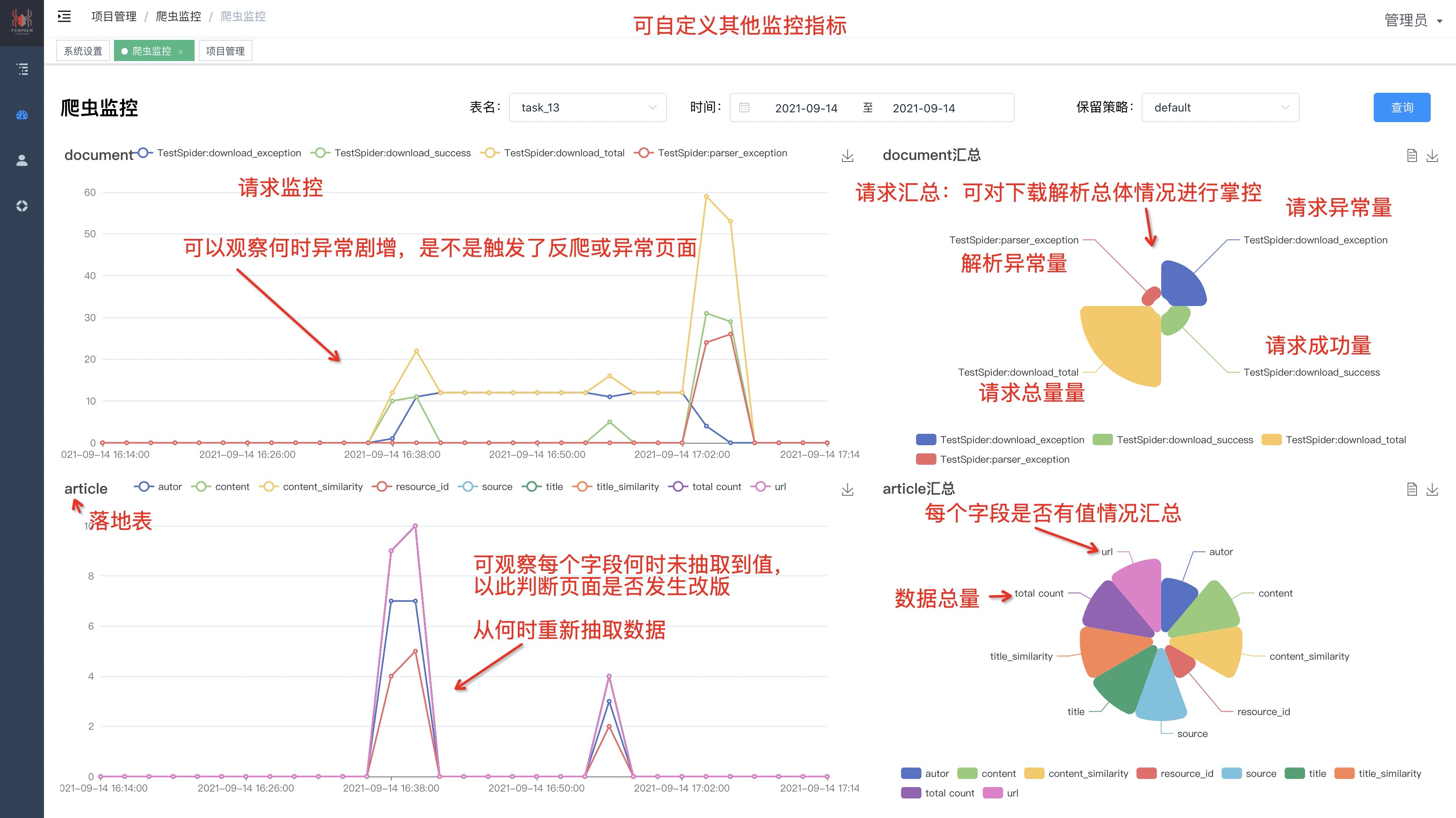

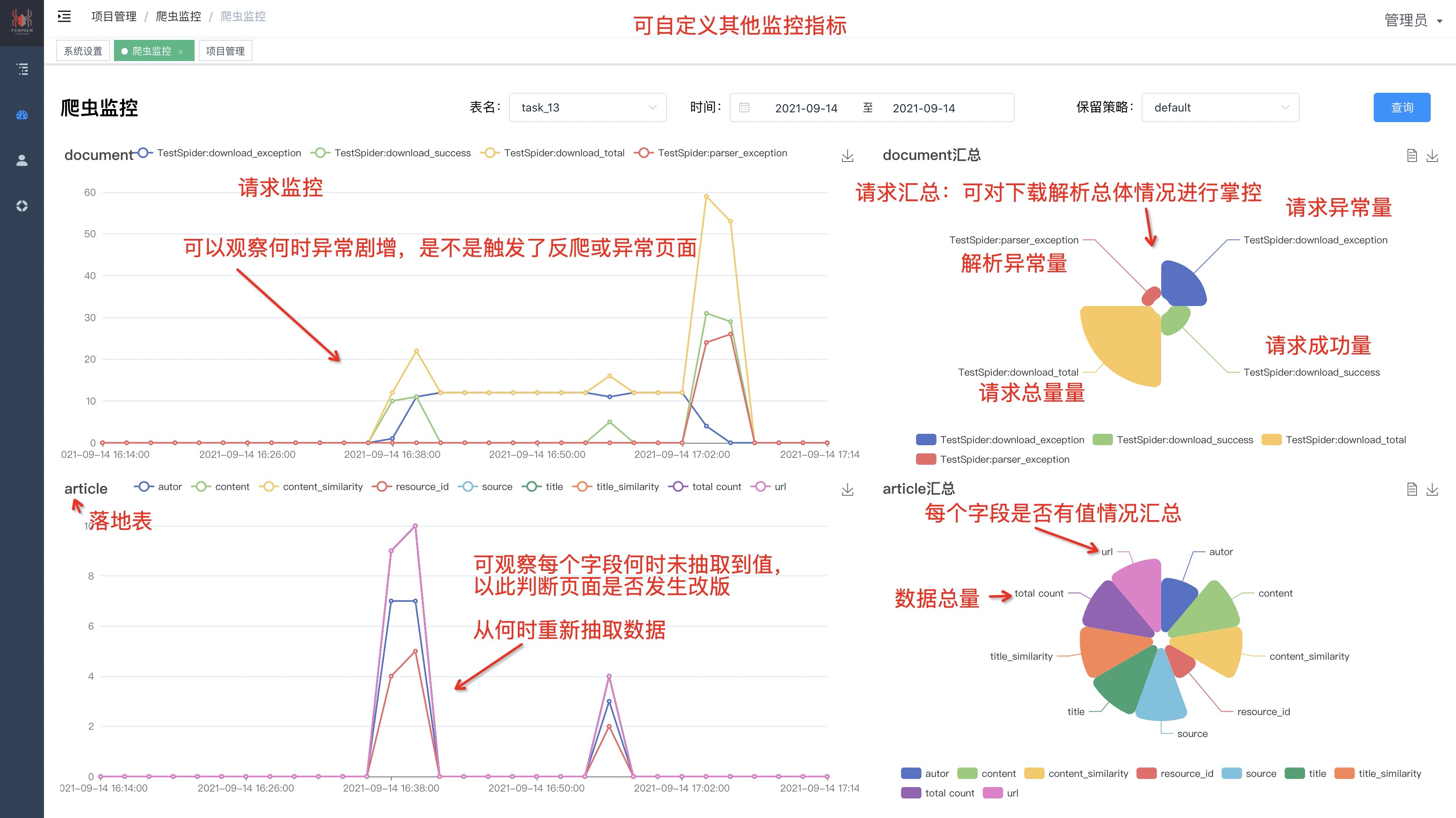

### 4. 爬虫监控

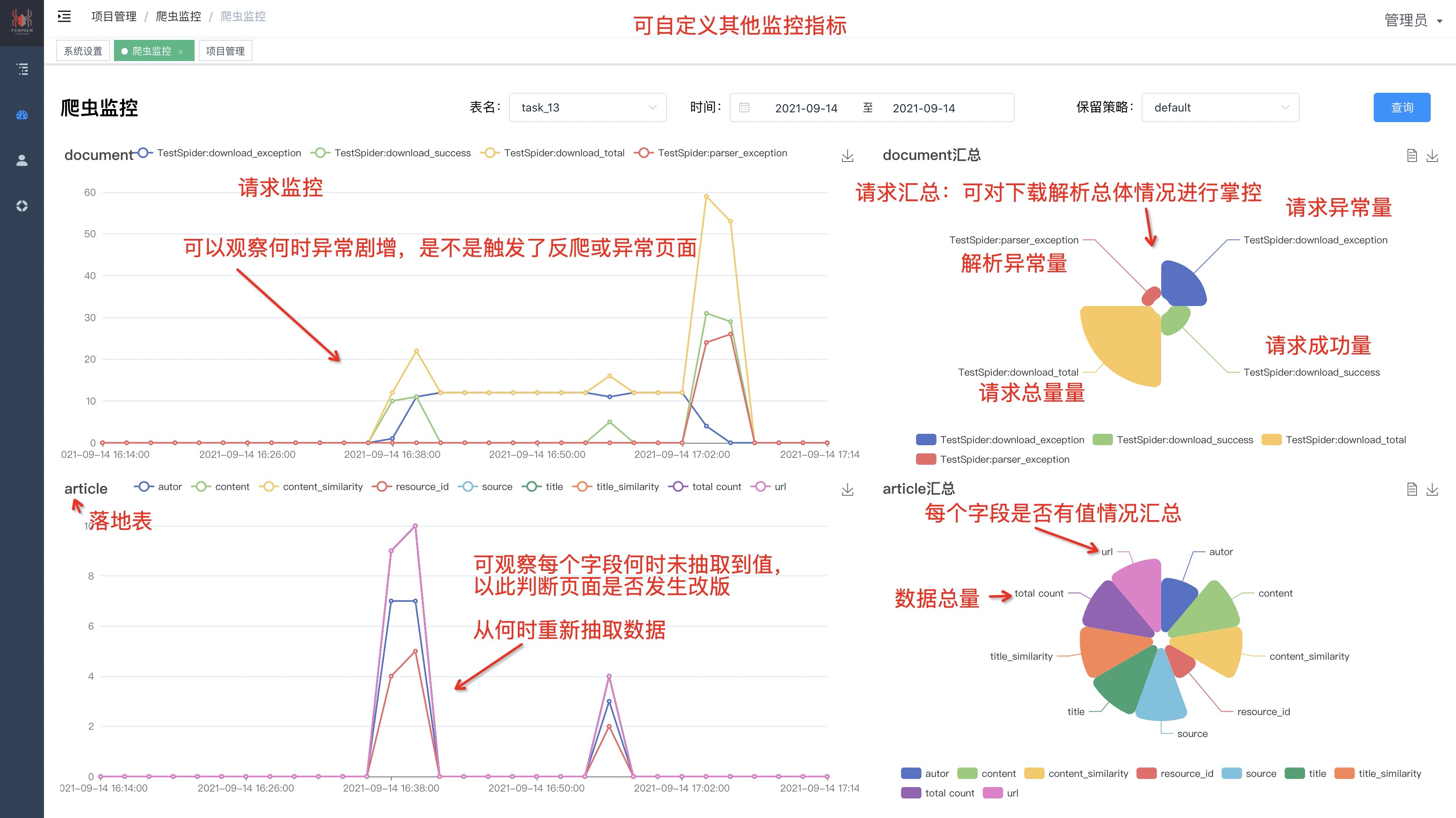

@@ -63,17 +70,43 @@ feaplat支持对feapder爬虫的运行情况进行监控,除了数据监控和

注:需 feapder>=1.6.6

-

+

+

+### 5. 报警

+调度异常、程序异常自动报警

+支持钉钉、企业微信、飞书、邮箱

+

+

+## 为什么用feaplat爬虫管理系统

+

+**稳!很稳!!相当稳!!!**

+

+### 市面上的爬虫管理系统

+

+

+

+worker节点常驻,且运行多个任务,不能弹性伸缩,任务之前会相互影响,稳定性得不到保障

+

+### feaplat爬虫管理系统

+

+

+

+worker节点根据任务动态生成,一个worker只运行一个任务实例,任务做完worker销毁,稳定性高;多个服务器间自动均衡分配,弹性伸缩

## 部署

-> 下面部署以centos为例, 其他平台docker安装方式可参考docker官方文档:https://docs.docker.com/compose/install/

+> 安装方式参考docker官方文档:https://docs.docker.com/compose/install/

### 1. 安装docker

-删除旧版本(可选,需要重装升级时执行)

+#### 1.1 centos系统

+

+> docker --version

+> 作者的docker版本为 20.10.12,低于此版本的可能会存在问题

+

+删除旧版本(可选,需要重装升级docker时执行)

```shell

yum remove docker docker-common docker-selinux docker-engine

@@ -87,12 +120,74 @@ yum install -y yum-utils device-mapper-persistent-data lvm2 && python2 /usr/bin/

```shell

yum install -y yum-utils device-mapper-persistent-data lvm2 && python2 /usr/bin/yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo && yum install docker-ce -y

```

-启动

+或者使用国内 daocloud 一键安装命令

+```

+curl -sSL https://get.daocloud.io/docker | sh

+```

+

+启动docker服务

+

```shell

systemctl enable docker

systemctl start docker

```

+验证: 打开终端,输入

+

+```shell

+docker ps

+```

+

+#### 1.2 ubuntu系统

+

+```

+sudo apt update

+sudo apt install docker.io docker-compose

+```

+

+启动docker服务

+

+```shell

+sudo systemctl enable docker

+sudo systemctl start docker

+```

+

+验证: 打开终端,输入

+

+```shell

+sudo docker ps

+```

+

+#### 1.3 window系统

+

+访问下面的链接,下载Docker Desktop, 然后安装即可

+

+https://docs.docker.com/desktop/setup/install/windows-install/

+

+

+运行安装好的Docker Desktop

+

+验证: 打开cmd终端,输入

+

+```shell

+docker ps

+```

+

+#### 1.4 mac系统

+

+访问下面的链接,下载Docker Desktop, 然后安装即可

+

+https://docs.docker.com/desktop/setup/install/mac-install/

+

+

+运行安装好的Docker Desktop

+

+验证: 打开终端,输入

+```shell

+docker ps

+```

+

+

### 2. 安装 docker swarm

docker swarm init

@@ -100,7 +195,12 @@ systemctl start docker

# 如果你的 Docker 主机有多个网卡,拥有多个 IP,必须使用 --advertise-addr 指定 IP

docker swarm init --advertise-addr 192.168.99.100

-### 3. 安装docker-compose

+### 3. 安装docker-compose(非必须)

+一般安装完docker后,会自带 docker compose。可先输入下面的命令验证是否有改环境,若有则不需要安装

+``` shell

+docker compose

+```

+若无`docker compose`命令,则按照下面的安装

```shell

sudo curl -L "https://github.com/docker/compose/releases/download/1.29.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

@@ -111,6 +211,9 @@ sudo chmod +x /usr/local/bin/docker-compose

sudo curl -L "https://get.daocloud.io/docker/compose/releases/download/1.29.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

sudo chmod +x /usr/local/bin/docker-compose

```

+安装后输入`docker-compose`验证是否成功

+

+注:`docker-compose` 与 `docker compose` 两种命令用法一样,是一个东西,只不过不同版本的docker可能叫法不一

### 4. 部署feaplat爬虫管理系统

#### 预备项

@@ -120,13 +223,16 @@ yum -y install git

```

#### 1. 下载项目

+> 先按照下面命令拉取develop分支代码运行。

+> master分支不支持urllib3>=2.0版本,现在已经运行不起来了,但之前老用户不受影响。待后续测试好兼容性,不影响老用户后,会将develop分支合并到master

+

gitub

```shell

-git clone https://github.com/Boris-code/feaplat.git

+git clone -b develop https://github.com/Boris-code/feaplat.git

```

gitee

```shell

-git clone https://gitee.com/Boris-code/feaplat.git

+git clone -b develop https://gitee.com/Boris-code/feaplat.git

```

#### 2. 运行

@@ -135,6 +241,8 @@ git clone https://gitee.com/Boris-code/feaplat.git

```shell

cd feaplat

+docker compose up -d

+或者

docker-compose up -d

```

@@ -170,13 +278,26 @@ docker-compose stop

docker swarm join-token worker

```

+输出举例如下

+

+```shell

+docker swarm join --token SWMTKN-1-1mix1x7noormwig1pjqzmrvgnw2m8zxqdzctqa8t3o8s25fjgg-9ot0h1gatxfh0qrxiee38xxxx 172.17.5.110:2377

+```

+

**在需扩充的服务器上执行**

```shell

docker swarm join --token [token] [ip]

```

-这条命令用于将该台服务器加入集群节点

+若服务器彼此之间不是内网,为公网环境,则需要将ip改成公网,且开放端口2377

+

+开启并检查2377端口

+```shell

+firewall-cmd --zone=public --add-port=2377/tcp --permanent

+firewall-cmd --reload

+firewall-cmd --query-port=2377/tcp

+```

#### 3. 验证是否成功

@@ -196,55 +317,93 @@ docker node ls

docker swarm leave

```

-## 拉取私有项目

+## 使用

-拉取私有项目需在git仓库里添加如下公钥

-

-```

-ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCd/k/tjbcMislEunjtYQNXxz5tgEDc/fSvuLHBNUX4PtfmMQ07TuUX2XJIIzLRPaqv3nsMn3+QZrV0xQd545FG1Cq83JJB98ATTW7k5Q0eaWXkvThdFeG5+n85KeVV2W4BpdHHNZ5h9RxBUmVZPpAZacdC6OUSBYTyCblPfX9DvjOk+KfwAZVwpJSkv4YduwoR3DNfXrmK5P+wrYW9z/VHUf0hcfWEnsrrHktCKgohZn9Fe8uS3B5wTNd9GgVrLGRk85ag+CChoqg80DjgFt/IhzMCArqwLyMn7rGG4Iu2Ie0TcdMc0TlRxoBhqrfKkN83cfQ3gDf41tZwp67uM9ZN feapder@qq.com

-```

-

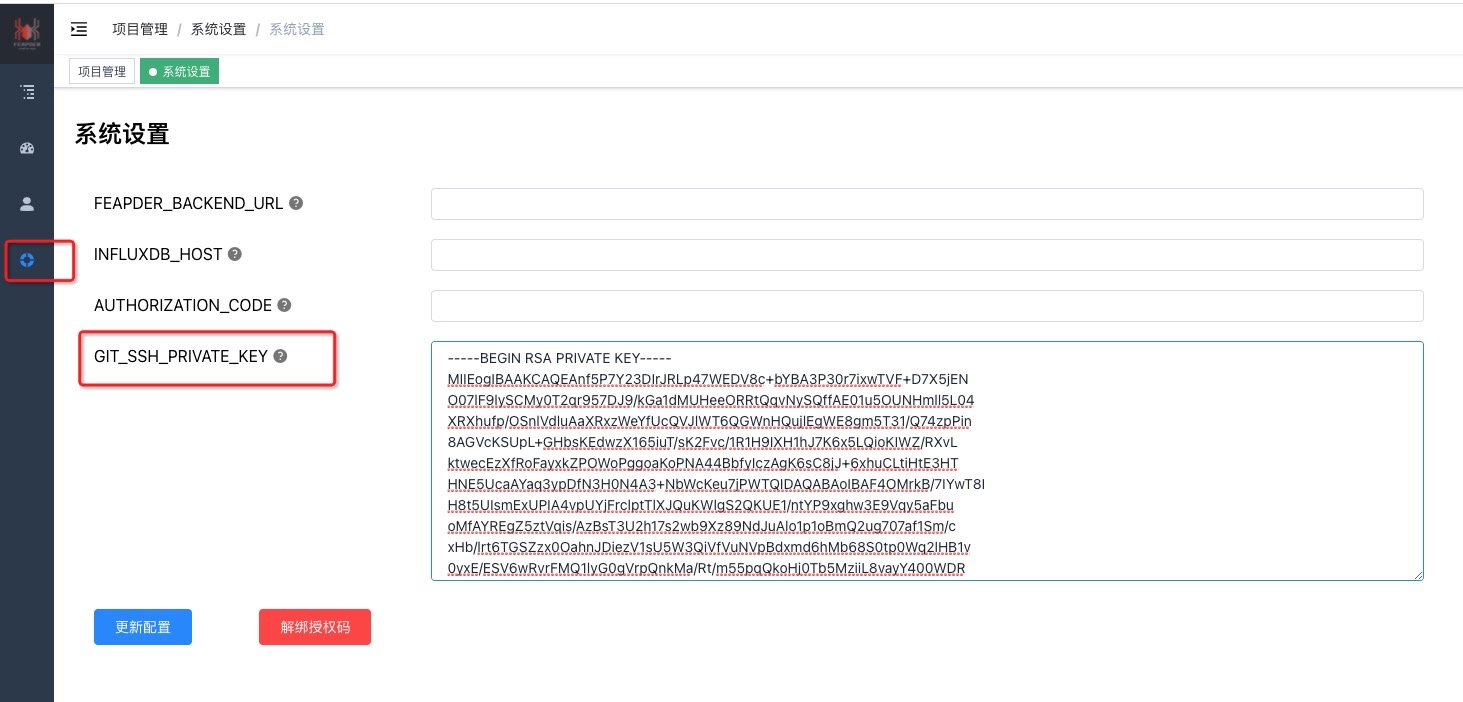

-或在系统设置页面配置您的SSH私钥,然后在git仓库里添加您的公钥,例如:

-

-

-注意,公私钥加密方式为RSA,其他的可能会有问题

-

-生成RSA公私钥方式如下:

-```shell

-ssh-keygen -t rsa -C "备注" -f 生成路径/文件名

-```

-如:

-`ssh-keygen -t rsa -C "feaplat" -f id_rsa`

-然后一路回车,不要输密码

-

-最终生成 `id_rsa`、`id_rsa.pub` 文件,复制`id_rsa.pub`文件内容到git仓库,复制`id_rsa`文件内容到feaplat爬虫管理系统

+见 [FEAPLAT使用说明](feapder_platform/usage)

## 自定义爬虫镜像

默认的爬虫镜像只打包了`feapder`、`scrapy`框架,若需要其它环境,可基于`.env`文件里的`SPIDER_IMAGE`镜像自行构建

-如将常用的python库打包到镜像

+如自定义python版本,安装常用的库等,需修改feaplat下的`feapder_dockerfile`

+

```

-FROM registry.cn-hangzhou.aliyuncs.com/feapderd/feapder:[最新版本号]

+# 基于最新的版本,若需要自定义python版本,则要求feapder版本号>=2.4

+FROM registry.cn-hangzhou.aliyuncs.com/feapderd/feapder:2.4

+

+# 安装自定义的python版本,3.10.8

+RUN set -ex \

+ && wget https://www.python.org/ftp/python/3.10.8/Python-3.10.8.tgz \

+ && tar -zxvf Python-3.10.8.tgz \

+ && cd Python-3.10.8 \

+ && ./configure prefix=/usr/local/python-3.10.8 \

+ && make \

+ && make install \

+ && make clean \

+ && rm -rf /Python-3.10.8* \

+ # 配置软链接

+ && ln -s /usr/local/python-3.10.8/bin/python3 /usr/bin/python3.10.8 \

+ && ln -s /usr/local/python-3.10.8/bin/pip3 /usr/bin/pip3.10.8

+

+# 删除之前的默认python版本

+RUN set -ex \

+ && rm -rf /usr/bin/python3 \

+ && rm -rf /usr/bin/pip3 \

+ && rm -rf /usr/bin/python \

+ && rm -rf /usr/bin/pip

+

+# 设置默认为python3.10.8

+RUN set -ex \

+ && ln -s /usr/local/python-3.10.8/bin/python3 /usr/bin/python \

+ && ln -s /usr/local/python-3.10.8/bin/python3 /usr/bin/python3 \

+ && ln -s /usr/local/python-3.10.8/bin/pip3 /usr/bin/pip \

+ && ln -s /usr/local/python-3.10.8/bin/pip3 /usr/bin/pip3

+

+# 将python3.10.8加入到环境变量

+ENV PATH=$PATH:/usr/local/python-3.10.8/bin/

# 安装依赖

RUN pip3 install feapder \

&& pip3 install scrapy

+

+# 安装node依赖包,内置的node为v10.15.3版本

+# RUN npm install packageName -g

```

-自己随便搞事情,搞完修改下 `.env`文件里的 SPIDER_IMAGE 的值即可

+改好后要打包镜像,打包命令:

+```

+docker build -f feapder_dockerfile -t 镜像名:版本号 .

+```

+如

+```

+docker build -f feapder_dockerfile -t my_feapder:1.0 .

+```

+

+打包好后修改下 `.env`文件里的 SPIDER_IMAGE 的值即可如:

+```

+SPIDER_IMAGE=my_feapder:1.0

+```

+注:

+1. 若有多个worker服务器,且没将镜像传到镜像服务,则需要手动将镜像推到其他服务器上,否则无法拉取此镜像运行

+2. 若自定义了python版本,则需要添加挂载,否则feaplat上自动安装的依赖库不会保留。挂载方式:修改`docker-compose.yaml`的 SPIDER_RUN_ARGS参数。如

+ ```

+ SPIDER_RUN_ARGS=["--mount type=volume,source=feapder_python3.10,destination=/usr/local/python-3.10.8"]

+ ```

## 价格

-| 类型 | 价格 | 说明 |

-|------|-----|-------------------------------|

-| 免费版 | 0元 | 可部署2个任务 |

-| 绑定版 | 188元 | 同一公网IP或机器码下永久使用 |

-| 非绑定版 | 288元 | 永久使用 |

+可免费部署20个任务,超出额度时,需购买授权码,在授权有效期内不限额度,可换绑服务器

+

+| 授权时长 | 价格 | 说明 |

+|------|------|---------------------|

+| 1个月 | 168元 | 无折扣|

+| 6个月| 666元 | 原价1008元,减免342元|

+| 1年 | 888元 | 原价2016元,减免1128元|

+| 2年 | 1500元 | 原价4032元,减免2532元|

-**所有版本功能一致,均可免费更新,永久使用**

+**删除任务不可恢复额度**

购买方式:添加微信 `boris_tm`

@@ -252,18 +411,18 @@ RUN pip3 install feapder \

## 学习交流

-

-

- | 知识星球:17321694 |

- 作者微信: boris_tm |

- QQ群号:750614606 |

-

-

+

+

+ | 知识星球:17321694 |

+ 作者微信: boris_tm |

+ QQ群号:521494615 |

+

+

-

- |

-  |

-  |

-

-

-

- 加好友备注:feaplat

+

+  |

+  |

+

+

+

+ 加好友备注:feapder

diff --git a/docs/feapder_platform/feaplat_bak.md b/docs/feapder_platform/feaplat_bak.md

new file mode 100644

index 00000000..87333075

--- /dev/null

+++ b/docs/feapder_platform/feaplat_bak.md

@@ -0,0 +1,288 @@

+# 爬虫管理系统 - FEAPLAT

+

+> 生而为虫,不止于虫

+

+**feaplat**命名源于 feapder 与 platform 的缩写

+

+读音: `[ˈfiːplæt] `

+

+

+

+## 特性

+

+1. 支持任何python脚本,包括不限于`feapder`、`scrapy`

+2. 支持浏览器渲染,支持有头模式。浏览器支持`playwright`、`selenium`

+3. 支持部署服务,可自动负载均衡

+4. 支持服务器集群管理

+5. 支持监控,监控内容可自定义

+6. 支持起多个实例,如分布式爬虫场景

+7. 支持弹性伸缩

+8. 支持4种定时启动方式

+9. 支持自定义worker镜像,如自定义java的运行环境、机器学习环境等,即根据自己的需求自定义(feaplat分为`master-调度端`和`worker-运行任务端`)

+10. docker一键部署,架设在docker swarm集群上

+

+

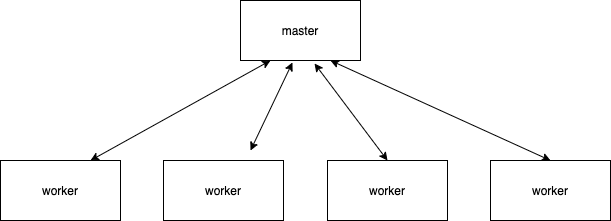

+## 为什么用feaplat爬虫管理系统

+

+**市面上的爬虫管理系统**

+

+

+

+worker节点常驻,且运行多个任务,不能弹性伸缩,任务之前会相互影响,稳定性得不到保障

+

+**feaplat爬虫管理系统**

+

+

+

+worker节点根据任务动态生成,一个worker只运行一个任务实例,任务做完worker销毁,稳定性高;多个服务器间自动均衡分配,弹性伸缩

+

+

+## 功能概览

+

+### 1. 项目管理

+

+添加/编辑项目

+

+

+### 2. 任务管理

+

+

+

+

+### 3. 任务实例

+

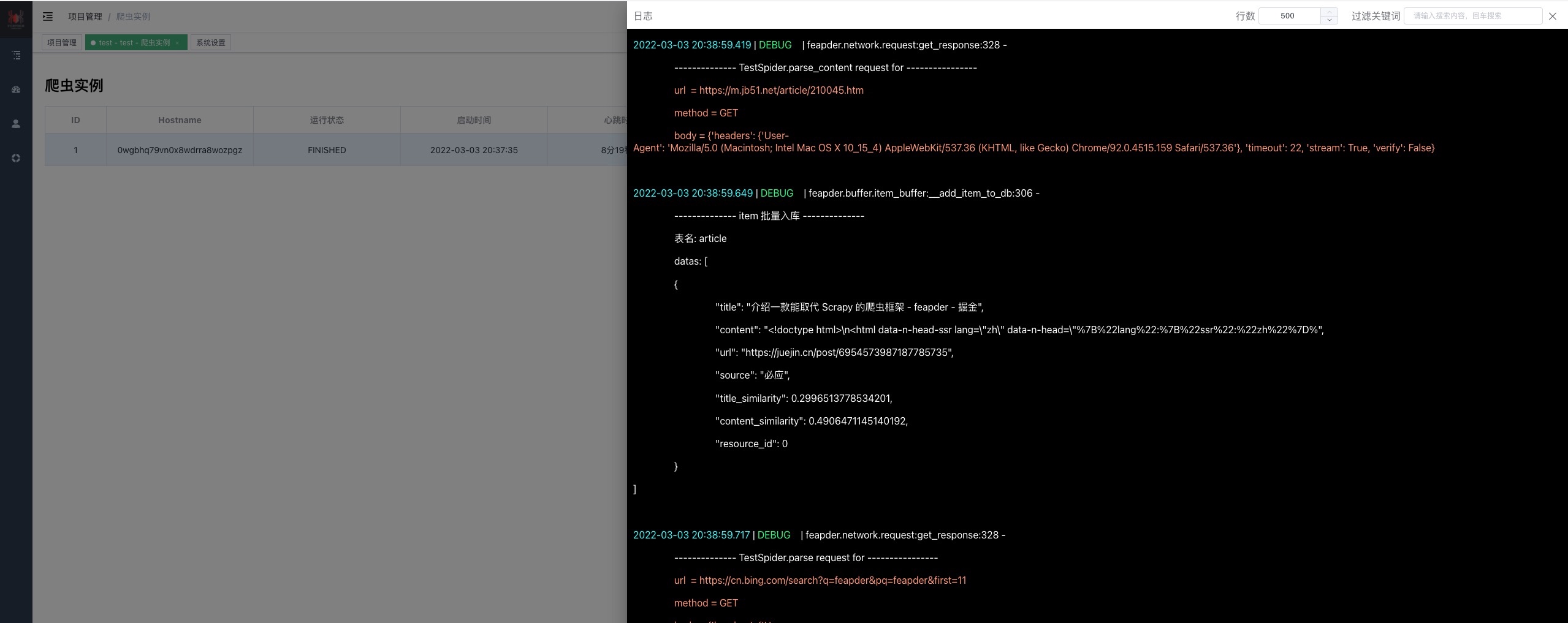

+日志

+

+

+

+### 4. 爬虫监控

+

+feaplat支持对feapder爬虫的运行情况进行监控,除了数据监控和请求监控外,用户还可自定义监控内容,详情参考[自定义监控](source_code/监控打点?id=自定义监控)

+

+若scrapy爬虫或其他python脚本使用监控功能,也可通过自定义监控的功能来支持,详情参考[自定义监控](source_code/监控打点?id=自定义监控)

+

+注:需 feapder>=1.6.6

+

+

+

+

+

+## 部署

+

+> 下面部署以centos为例, 其他平台docker安装方式可参考docker官方文档:https://docs.docker.com/compose/install/

+

+### 1. 安装docker

+

+删除旧版本(可选,需要重装升级时执行)

+

+```shell

+yum remove docker docker-common docker-selinux docker-engine

+```

+

+安装:

+```shell

+yum install -y yum-utils device-mapper-persistent-data lvm2 && python2 /usr/bin/yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo && yum install docker-ce -y

+```

+国内用户推荐使用

+```shell

+yum install -y yum-utils device-mapper-persistent-data lvm2 && python2 /usr/bin/yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo && yum install docker-ce -y

+```

+或者使用国内 daocloud 一键安装命令

+```

+curl -sSL https://get.daocloud.io/docker | sh

+```

+

+

+

+启动

+```shell

+systemctl enable docker

+systemctl start docker

+```

+

+### 2. 安装 docker swarm

+

+ docker swarm init

+

+ # 如果你的 Docker 主机有多个网卡,拥有多个 IP,必须使用 --advertise-addr 指定 IP

+ docker swarm init --advertise-addr 192.168.99.100

+

+### 3. 安装docker-compose

+

+```shell

+sudo curl -L "https://github.com/docker/compose/releases/download/1.29.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

+sudo chmod +x /usr/local/bin/docker-compose

+```

+国内用户推荐使用

+```shell

+sudo curl -L "https://get.daocloud.io/docker/compose/releases/download/1.29.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

+sudo chmod +x /usr/local/bin/docker-compose

+```

+

+### 4. 部署feaplat爬虫管理系统

+#### 预备项

+安装git(1.8.3的版本已够用)

+```shell

+yum -y install git

+```

+#### 1. 下载项目

+

+gitub

+```shell

+git clone https://github.com/Boris-code/feaplat.git

+```

+gitee

+```shell

+git clone https://gitee.com/Boris-code/feaplat.git

+```

+

+#### 2. 运行

+

+首次运行需拉取镜像,时间比较久,且运行可能会报错,再次运行下就好了

+

+```shell

+cd feaplat

+docker-compose up -d

+```

+

+- 若端口冲突,可修改.env文件,参考[常见问题](feapder_platform/question?id=修改端口)

+

+#### 3. 访问爬虫管理系统

+

+默认地址:`http://localhost`

+默认账密:admin / admin

+

+- 若未成功,参考[常见问题](feapder_platform/question)

+- 使用说明,参考[使用说明](feapder_platform/usage)

+

+#### 4. 停止(可选)

+

+```shell

+docker-compose stop

+```

+

+### 5. 添加服务器(可选)

+

+> 用于搭建集群,扩展爬虫(worker)节点服务器

+

+#### 1. 安装docker

+

+参考部署步骤1

+

+#### 2. 部署

+

+在master服务器(feaplat爬虫管理系统所在服务器)执行下面命令,查看token

+

+```shell

+docker swarm join-token worker

+```

+

+输出举例如下

+

+```shell

+docker swarm join --token SWMTKN-1-1mix1x7noormwig1pjqzmrvgnw2m8zxqdzctqa8t3o8s25fjgg-9ot0h1gatxfh0qrxiee38xxxx 172.17.5.110:2377

+```

+

+**在需扩充的服务器上执行**

+

+```shell

+docker swarm join --token [token] [ip]

+```

+

+若服务器彼此之间不是内网,为公网环境,则需要将ip改成公网,且开放端口2377

+

+开启并检查2377端口

+```shell

+firewall-cmd --zone=public --add-port=2377/tcp --permanent

+firewall-cmd --reload

+firewall-cmd --query-port=2377/tcp

+```

+

+#### 3. 验证是否成功

+

+在master服务器(feaplat爬虫管理系统所在服务器)执行下面命令

+

+```shell

+docker node ls

+```

+

+若打印结果包含刚加入的服务器,则添加服务器成功

+

+#### 4. 下线服务器(可选)

+

+在需要下线的服务器上执行

+

+```shell

+docker swarm leave

+```

+

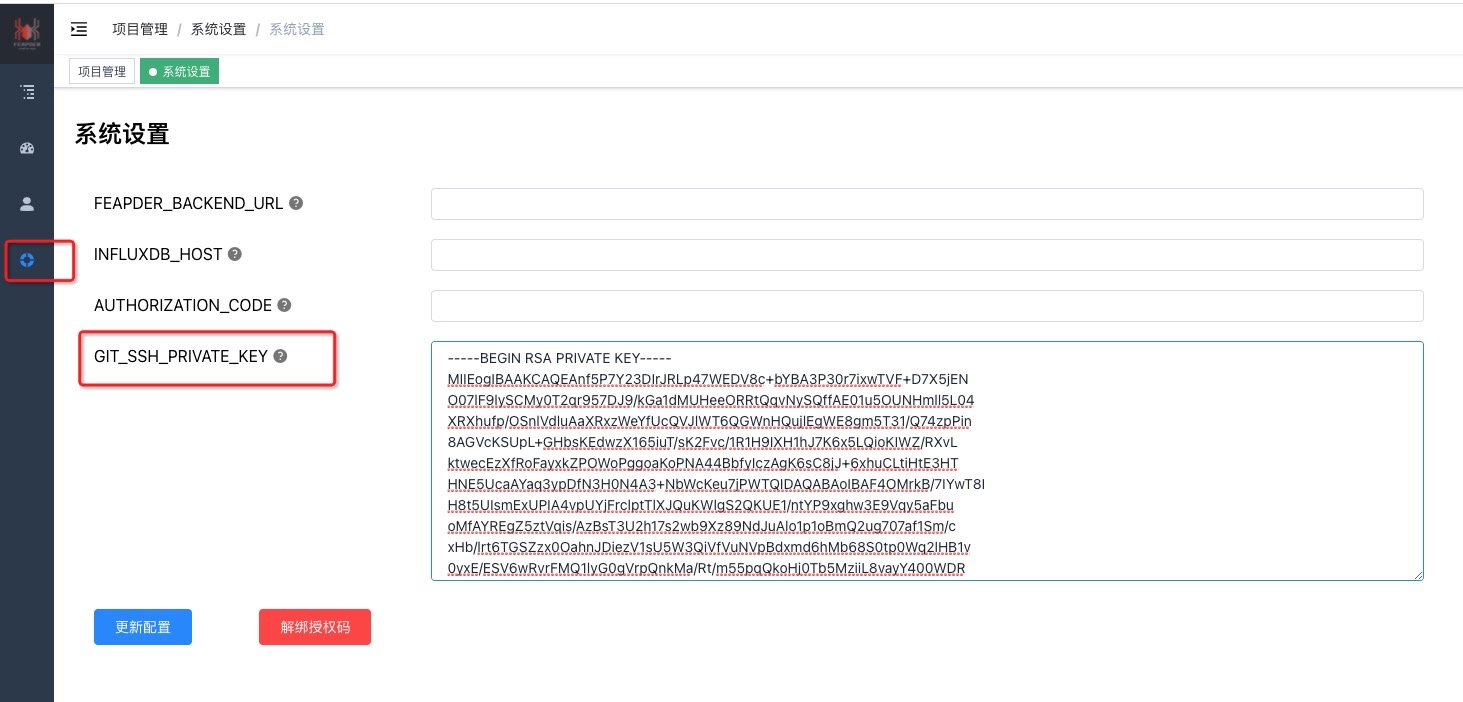

+## 拉取私有项目

+

+拉取私有项目需在git仓库里添加如下公钥

+

+```

+ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCd/k/tjbcMislEunjtYQNXxz5tgEDc/fSvuLHBNUX4PtfmMQ07TuUX2XJIIzLRPaqv3nsMn3+QZrV0xQd545FG1Cq83JJB98ATTW7k5Q0eaWXkvThdFeG5+n85KeVV2W4BpdHHNZ5h9RxBUmVZPpAZacdC6OUSBYTyCblPfX9DvjOk+KfwAZVwpJSkv4YduwoR3DNfXrmK5P+wrYW9z/VHUf0hcfWEnsrrHktCKgohZn9Fe8uS3B5wTNd9GgVrLGRk85ag+CChoqg80DjgFt/IhzMCArqwLyMn7rGG4Iu2Ie0TcdMc0TlRxoBhqrfKkN83cfQ3gDf41tZwp67uM9ZN feapder@qq.com

+```

+

+或在系统设置页面配置您的SSH私钥,然后在git仓库里添加您的公钥,例如:

+

+

+注意,公私钥加密方式为RSA,其他的可能会有问题

+

+生成RSA公私钥方式如下:

+```shell

+ssh-keygen -t rsa -C "备注" -f 生成路径/文件名

+```

+如:

+`ssh-keygen -t rsa -C "feaplat" -f id_rsa`

+然后一路回车,不要输密码

+

+最终生成 `id_rsa`、`id_rsa.pub` 文件,复制`id_rsa.pub`文件内容到git仓库,复制`id_rsa`文件内容到feaplat爬虫管理系统

+

+## 自定义爬虫镜像

+

+默认的爬虫镜像只打包了`feapder`、`scrapy`框架,若需要其它环境,可基于`.env`文件里的`SPIDER_IMAGE`镜像自行构建

+

+如将常用的python库打包到镜像

+```

+FROM registry.cn-hangzhou.aliyuncs.com/feapderd/feapder:[最新版本号]

+

+# 安装依赖

+RUN pip3 install feapder \

+ && pip3 install scrapy

+

+```

+

+自己随便搞事情,搞完修改下 `.env`文件里的 SPIDER_IMAGE 的值即可

+

+

+## 价格

+

+| 类型 | 价格 | 说明 |

+|------|------|---------------------|

+| 试用版 | 0元 | 可部署20个任务,删除任务不可恢复额度 |

+| 正式版 | 888元 | 有效期一年,可换绑服务器 |

+

+**部署后默认为试用版,购买授权码后配置到系统里即为正式版**

+

+购买方式:添加微信 `boris_tm`

+

+随着功能的完善,价格会逐步调整

+

+## 学习交流

+

+

+

+ | 知识星球:17321694 |

+ 作者微信: boris_tm |

+ QQ群号:750614606 |

+

+

+  +

+ |

+  |

+  |

+

+

+

+ 加好友备注:feaplat

diff --git a/docs/feapder_platform/question.md b/docs/feapder_platform/question.md

index 9b59ee6c..78de0f2f 100644

--- a/docs/feapder_platform/question.md

+++ b/docs/feapder_platform/question.md

@@ -52,8 +52,14 @@ INFLUXDB_PORT_UDP=8089

1. 查看后端日志,观察报错

1. 若是docker版本问题,参考部署一节安装最新版本,

2. 若是报 `This node is not a swarm manager`,则是部署环境没准备好,执行`docker swarm init`,可参考参考部署一节

-2. 查看镜像`docker images`,若不存在爬虫镜像`registry.cn-hangzhou.aliyuncs.com/feapderd/feapder`,可能自动拉取失败了,可手动拉取,拉取命令:`docker pull registry.cn-hangzhou.aliyuncs.com/feapderd/feapder:版本号`,版本号在`.env`里查看

-3. 重启docker服务,Centos对应的命令为:`service docker restart`,其他自行查资料

+2. 查看worker状态:

+ ```

+ docker service ps task_任务id --no-trunc

+ ```

+ 看看error信息

+

+4. 查看镜像`docker images`,若不存在爬虫镜像`registry.cn-hangzhou.aliyuncs.com/feapderd/feapder`,可能自动拉取失败了,可手动拉取,拉取命令:`docker pull registry.cn-hangzhou.aliyuncs.com/feapderd/feapder:版本号`,版本号在`.env`里查看

+5. 重启docker服务,Centos对应的命令为:`service docker restart`,其他自行查资料

## 依赖包安装失败,可手动安装包

@@ -88,7 +94,62 @@ INFLUXDB_PORT_UDP=8089

rm -f /etc/localtime

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

-# 校对时间

+# 校对时间 方式1

clock --hctosys

+# 校对时间 方式2

+ntpdate 0.asia.pool.ntp.org

```

-

\ No newline at end of file

+

+## 我搭建了个集群,如何让主节点不跑任务

+

+在主节点上执行下面命令,将其设置成drain状态即可

+

+ docker node update --availability drain 节点id

+

+ ## Network 问题

+

+attaching to network failed, make sure your network options are correct and check manager logs: context deadline exceeded

+

+

+1. 确定当前节点是不是Drain节点:docker node ls

+

+

+

+ 是则继续往下看,不是则在评论区留言

+

+1. 修复

+

+ ```

+ docker node update --availability active 节点id

+ docker node update --availability drain 节点id

+ ```

+

+原因是Drain节点,不能为其分配网络资源,需要先改成active,然后启动,之后在改回drain

+

+**若不是以上情况,可能是network内的可分配的ip满了(老版本feaplat会有这个问题),那么可继续往下看**

+

+1. 先检查feaplat目录下的docker-compost.yaml,翻到最后,看network相关配置是否为如下。若不是,则改成下面这样的。若下面指定的11 ip段和主机有冲突,可以写12、13等

+

+ ```

+ networks:

+ default:

+ name: feaplat

+ driver: overlay

+ attachable: true

+ ipam:

+ config:

+ - subnet: 11.0.0.0/8

+ gateway: 11.0.0.1

+ ```

+

+ 完整配置见:https://github.com/Boris-code/feaplat/blob/develop/docker-compose.yaml

+

+

+2. 改完后,需要删除之前的network,使其重新创建,命令如下:

+

+ ```

+ docker service ls -q | xargs docker service rm # 注意 这个会停止掉所有任务。

+ docker network rm feaplat # 删除网络

+ docker compose rm # 删除之前feaplat运行环境

+ docker compose up -d # 启动

+ ```

\ No newline at end of file

diff --git a/docs/feapder_platform/usage.md b/docs/feapder_platform/usage.md

index 100cd423..20e7bb12 100644

--- a/docs/feapder_platform/usage.md

+++ b/docs/feapder_platform/usage.md

@@ -31,7 +31,7 @@

1. 准备项目,项目结构如下:

-2. 压缩后上传:

+2. 压缩后上传:(推荐使用 `feapder zip` 命令压缩)

- 工作路径:上传的项目会被放到docker里的根目录下(跟你本机项目路径没关系),然后解压运行。因`feapder_demo.zip`解压后为`feapder_demo`,所以工作路径配置`/feapder_demo`

- 本项目没依赖,可以不配置`requirements.txt`

@@ -44,6 +44,30 @@

可以看到已经运行完毕

+

+## git方式拉取私有项目

+

+拉取私有项目需在git仓库里添加如下公钥

+

+```

+ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQCd/k/tjbcMislEunjtYQNXxz5tgEDc/fSvuLHBNUX4PtfmMQ07TuUX2XJIIzLRPaqv3nsMn3+QZrV0xQd545FG1Cq83JJB98ATTW7k5Q0eaWXkvThdFeG5+n85KeVV2W4BpdHHNZ5h9RxBUmVZPpAZacdC6OUSBYTyCblPfX9DvjOk+KfwAZVwpJSkv4YduwoR3DNfXrmK5P+wrYW9z/VHUf0hcfWEnsrrHktCKgohZn9Fe8uS3B5wTNd9GgVrLGRk85ag+CChoqg80DjgFt/IhzMCArqwLyMn7rGG4Iu2Ie0TcdMc0TlRxoBhqrfKkN83cfQ3gDf41tZwp67uM9ZN feapder@qq.com

+```

+

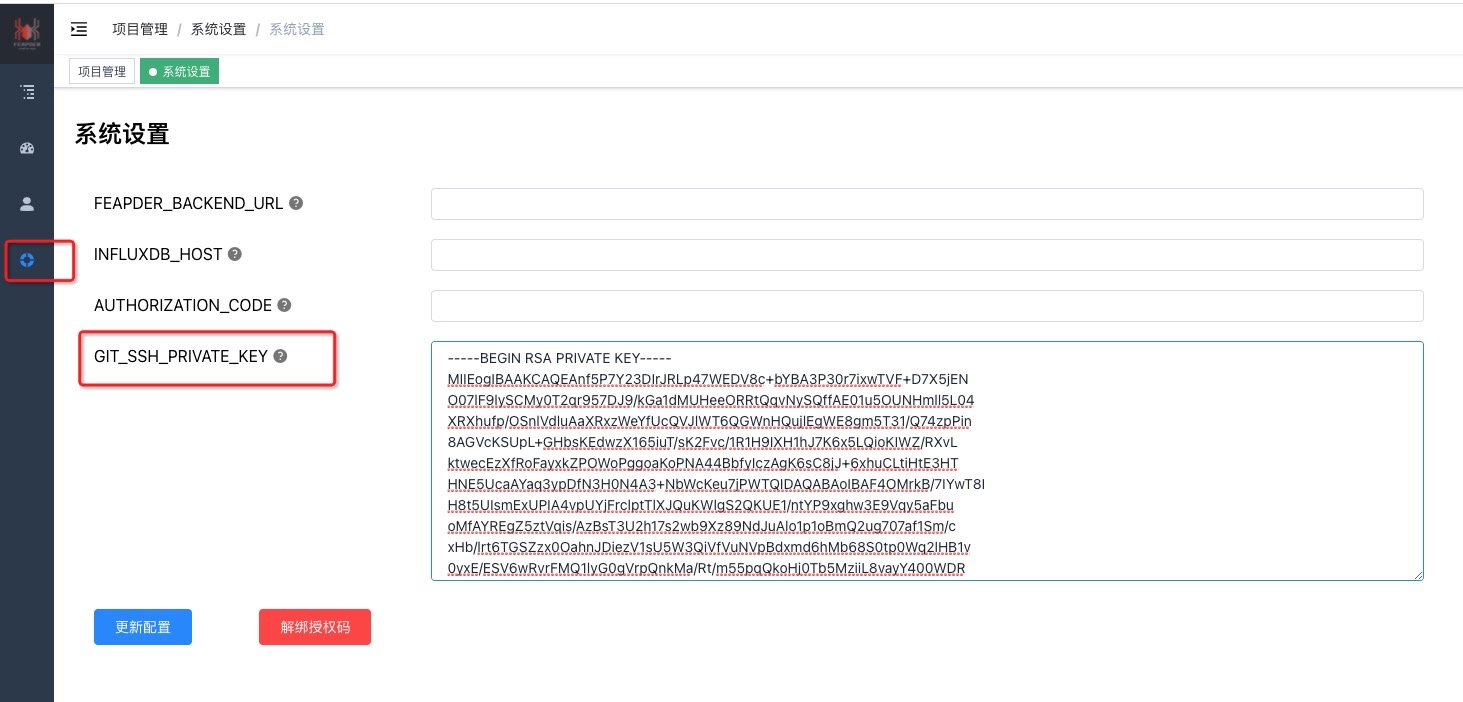

+或在系统设置页面配置您的SSH私钥,然后在git仓库里添加您的公钥,例如:

+

+

+注意,公私钥加密方式为RSA,其他的可能会有问题

+

+生成RSA公私钥方式如下:

+```shell

+ssh-keygen -t rsa -C "备注" -f 生成路径/文件名

+```

+如:

+`ssh-keygen -t rsa -C "feaplat" -f id_rsa`

+然后一路回车,不要输密码

+

+最终生成 `id_rsa`、`id_rsa.pub` 文件,复制`id_rsa.pub`文件内容到git仓库,复制`id_rsa`文件内容到feaplat爬虫管理系统

+

## 爬虫监控

diff --git a/docs/images/aliyun_sale.jpg b/docs/images/aliyun_sale.jpg

deleted file mode 100644

index f7b42b1a..00000000

Binary files a/docs/images/aliyun_sale.jpg and /dev/null differ

diff --git a/docs/images/qingguo.jpg b/docs/images/qingguo.jpg

new file mode 100644

index 00000000..24331df2

Binary files /dev/null and b/docs/images/qingguo.jpg differ

diff --git a/docs/index.html b/docs/index.html

index 75f1c322..d1112896 100644

--- a/docs/index.html

+++ b/docs/index.html

@@ -2,160 +2,171 @@

-

- feapder-document

-

-

-

-

-

-

-

-

-

-

-

-

-

-

-

-

+

+ feapder官方文档|feapder-document

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

-

-

-

-

-

-

-

-

-

-

-

-

-

-

-

+

+

+

+

+

+

+

+

+

-

-

-

-

-

-

-

-

-

-

-

-

+ -->

+

+

+

+

+

+

+

+

+

+

+

+

+

+

diff --git a/docs/lib/docsify-copy-code/docsify-copy-code.min.js b/docs/lib/docsify-copy-code/docsify-copy-code.min.js

new file mode 100644

index 00000000..dee84c79

--- /dev/null

+++ b/docs/lib/docsify-copy-code/docsify-copy-code.min.js

@@ -0,0 +1,9 @@

+/*!

+ * docsify-copy-code

+ * v2.1.0

+ * https://github.com/jperasmus/docsify-copy-code

+ * (c) 2017-2019 JP Erasmus

+ * MIT license

+ */

+!function(){"use strict";function r(o){return(r="function"==typeof Symbol&&"symbol"==typeof Symbol.iterator?function(o){return typeof o}:function(o){return o&&"function"==typeof Symbol&&o.constructor===Symbol&&o!==Symbol.prototype?"symbol":typeof o})(o)}!function(o,e){void 0===e&&(e={});var t=e.insertAt;if(o&&"undefined"!=typeof document){var n=document.head||document.getElementsByTagName("head")[0],c=document.createElement("style");c.type="text/css","top"===t&&n.firstChild?n.insertBefore(c,n.firstChild):n.appendChild(c),c.styleSheet?c.styleSheet.cssText=o:c.appendChild(document.createTextNode(o))}}(".docsify-copy-code-button,.docsify-copy-code-button span{cursor:pointer;transition:all .25s ease}.docsify-copy-code-button{position:absolute;z-index:1;top:0;right:0;overflow:visible;padding:.65em .8em;border:0;border-radius:0;outline:0;font-size:1em;background:grey;background:var(--theme-color,grey);color:#fff;opacity:0}.docsify-copy-code-button span{border-radius:3px;background:inherit;pointer-events:none}.docsify-copy-code-button .error,.docsify-copy-code-button .success{position:absolute;z-index:-100;top:50%;left:0;padding:.5em .65em;font-size:.825em;opacity:0;-webkit-transform:translateY(-50%);transform:translateY(-50%)}.docsify-copy-code-button.error .error,.docsify-copy-code-button.success .success{opacity:1;-webkit-transform:translate(-115%,-50%);transform:translate(-115%,-50%)}.docsify-copy-code-button:focus,pre:hover .docsify-copy-code-button{opacity:1}"),document.querySelector('link[href*="docsify-copy-code"]')&&console.warn("[Deprecation] Link to external docsify-copy-code stylesheet is no longer necessary."),window.DocsifyCopyCodePlugin={init:function(){return function(o,e){o.ready(function(){console.warn("[Deprecation] Manually initializing docsify-copy-code using window.DocsifyCopyCodePlugin.init() is no longer necessary.")})}}},window.$docsify=window.$docsify||{},window.$docsify.plugins=[function(o,s){o.doneEach(function(){var o=Array.apply(null,document.querySelectorAll("pre[data-lang]")),c={buttonText:"Copy to clipboard",errorText:"Error",successText:"Copied"};s.config.copyCode&&Object.keys(c).forEach(function(t){var n=s.config.copyCode[t];"string"==typeof n?c[t]=n:"object"===r(n)&&Object.keys(n).some(function(o){var e=-1',''.concat(c.buttonText,""),''.concat(c.errorText,""),''.concat(c.successText,""),""].join("");o.forEach(function(o){o.insertAdjacentHTML("beforeend",e)})}),o.mounted(function(){document.querySelector(".content").addEventListener("click",function(o){if(o.target.classList.contains("docsify-copy-code-button")){var e="BUTTON"===o.target.tagName?o.target:o.target.parentNode,t=document.createRange(),n=e.parentNode.querySelector("code"),c=window.getSelection();t.selectNode(n),c.removeAllRanges(),c.addRange(t);try{document.execCommand("copy")&&(e.classList.add("success"),setTimeout(function(){e.classList.remove("success")},1e3))}catch(o){console.error("docsify-copy-code: ".concat(o)),e.classList.add("error"),setTimeout(function(){e.classList.remove("error")},1e3)}"function"==typeof(c=window.getSelection()).removeRange?c.removeRange(t):"function"==typeof c.removeAllRanges&&c.removeAllRanges()}})})}].concat(window.$docsify.plugins||[])}();

+//# sourceMappingURL=docsify-copy-code.min.js.map

diff --git "a/docs/question/setting\344\270\215\347\224\237\346\225\210\351\227\256\351\242\230.md" "b/docs/question/setting\344\270\215\347\224\237\346\225\210\351\227\256\351\242\230.md"

new file mode 100644

index 00000000..0a443c97

--- /dev/null

+++ "b/docs/question/setting\344\270\215\347\224\237\346\225\210\351\227\256\351\242\230.md"

@@ -0,0 +1,38 @@

+# setting不生效问题

+

+## 问题

+

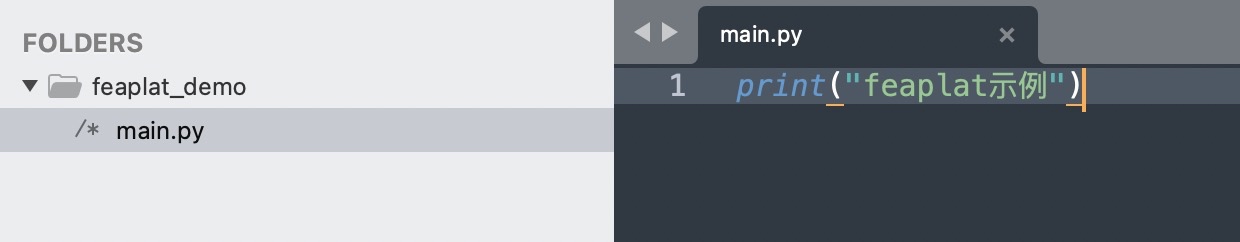

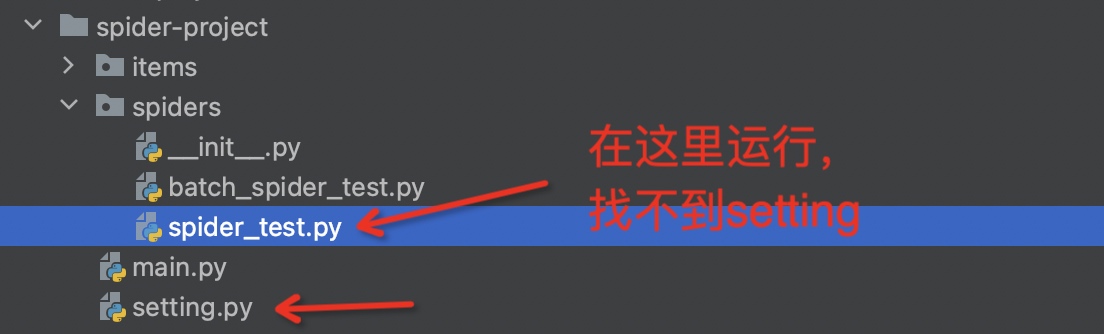

+以下面这个项目结构为例,在`spiders`目录下运行`spider_test.py`读取不到`setting.py`,所以`setting`的配置不生效。

+

+

+

+读取不到是因为python的环境变量问题,在spiders目录下运行,只会找spides目录下的文件

+

+## 解决方式

+

+### 方法1:在setting同级目录下运行

+

+在main.py中导入spider_test, 然后运行main.py

+

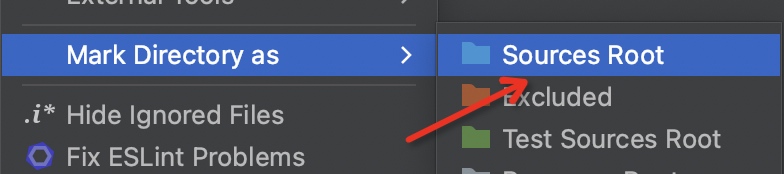

+### 方法2:设置工作区间

+

+设置工作区间方式(以pycharm为例):项目->右键->Mark Directory as -> Sources Root

+

+

+

+### 方法3:设置PYTHONPATH

+

+以mac或linux举例,执行如下命令

+

+```shell

+export PYTHONPATH=$PYTHONPATH:/绝对路径/spider-project

+```

+注:这个命令设置的环境变量只在当前终端有效

+

+然后即可在spiders目录下运行

+

+```shell

+python spider_test.py

+```

+

+window如何添加环境变量大家自行探索,搞定了可在评论区留言

\ No newline at end of file

diff --git "a/docs/question/\350\277\220\350\241\214\351\227\256\351\242\230.md" "b/docs/question/\350\277\220\350\241\214\351\227\256\351\242\230.md"

index cbc84e3b..ade03f4d 100644

--- "a/docs/question/\350\277\220\350\241\214\351\227\256\351\242\230.md"

+++ "b/docs/question/\350\277\220\350\241\214\351\227\256\351\242\230.md"

@@ -21,7 +21,7 @@

delete_keys为需要删除的key,类型: 元组/bool/string,支持正则; 常用于清空任务队列,否则重启时会断点续爬,如写成`delete_keys=True`也是可以的

-1. 手动修改任务分数为小于当前时间搓的分数

+1. 手动修改任务分数为小于当前时间戳的分数

diff --git a/docs/source_code/Item.md b/docs/source_code/Item.md

index 3aafe547..e48218b9 100644

--- a/docs/source_code/Item.md

+++ b/docs/source_code/Item.md

@@ -102,6 +102,26 @@ class SpiderDataItem(Item):

self.title = self.title.strip()

```

+## 指定入库使用的pipelines

+

+```python

+

+from feapder import Item

+from feapder.pipelines.csv_pipeline import CsvPipeline

+

+

+class SpiderDataItem(Item):

+

+ __pipelines__ = [CsvPipeline()]

+

+ def __init__(self, *args, **kwargs):

+ # self.id = None

+ self.title = None

+```

+

+使用__pipelines__指定后,该item只会流经指定的pipelines处理

+

+

## 更新数据

采集过程中,往往会有些数据漏采或解析出错,如果我们想更新已入库的数据,可将Item转为UpdateItem

diff --git a/docs/source_code/Response.md b/docs/source_code/Response.md

index d769a484..0fa80e60 100644

--- a/docs/source_code/Response.md

+++ b/docs/source_code/Response.md

@@ -145,13 +145,39 @@ response.open()

这个函数会打开浏览器,渲染下载内容,方便查看下载内容是否与数据源一致

-### 11. 将普通response转为feapder.Response

+### 11. 更新response.text的值

+

+```

+response.text = ""

+```

+常用于浏览器渲染模式,如页面有变化,可以取最新的页面内容更新到response.text里,然后使用response的选择器提取内容

+

+### 12. 将普通response转为feapder.Response

```

response = feapder.Response(response)

```

-### 12. 序列化与反序列化

+### 13. 将源码转为feapder.Response

+

+```

+response = feapder.Response.from_text(text=html, url="", cookies={}, headers={})

+```

+

+url是网页的地址,用来将html里的链接转为绝对链接,若不提供,则无法转换

+

+示例:

+```

+import feapder

+

+html = "hello word"

+response = feapder.Response.from_text(text=html, url="https://www.feapder.com", cookies={}, headers={})

+print(response.xpath("//a/@href").extract_first())

+

+输出:https://www.feapder.com/666

+```

+

+### 14. 序列化与反序列化

序列化

@@ -160,6 +186,7 @@ response = feapder.Response(response)

反序列化

feapder.Response.from_dict(response_dict)

+

### 其他

diff --git "a/docs/source_code/Spider\350\277\233\351\230\266.md" "b/docs/source_code/Spider\350\277\233\351\230\266.md"

index c99608b3..215898a8 100644

--- "a/docs/source_code/Spider\350\277\233\351\230\266.md"

+++ "b/docs/source_code/Spider\350\277\233\351\230\266.md"

@@ -46,9 +46,9 @@ redis_key为redis中存储任务等信息的key前缀,如redis_key="feapder:sp

key的命名方式为[配置文件](source_code/配置文件.md)中定义的

# 任务表模版

- TAB_REQUSETS = "{redis_key}:z_requsets"

+ TAB_REQUESTS = "{redis_key}:z_requsets"

# 任务失败模板

- TAB_FAILED_REQUSETS = "{redis_key}:z_failed_requsets"

+ TAB_FAILED_REQUESTS = "{redis_key}:z_failed_requsets"

# 爬虫状态表模版

TAB_SPIDER_STATUS = "{redis_key}:z_spider_status"

# item 表模版

diff --git a/docs/source_code/UpdateItem.md b/docs/source_code/UpdateItem.md

index a461fad4..3036628a 100644

--- a/docs/source_code/UpdateItem.md

+++ b/docs/source_code/UpdateItem.md

@@ -1,6 +1,6 @@

# UpdateItem

-UpdateItem用于更新数据,继承至Item,所以使用方式基本与Item一致,下载只说不同之处

+UpdateItem用于更新数据,继承至Item,所以使用方式基本与Item一致,下面只说不同之处

## 更新逻辑

@@ -70,4 +70,4 @@ item = item.to_UpdateItem()

item.update_key = "title"

```

-**推荐方式1,直接改Item类,不用修改爬虫代码**

\ No newline at end of file

+**推荐方式1,直接改Item类,不用修改爬虫代码**

diff --git a/docs/source_code/custom_downloader.md b/docs/source_code/custom_downloader.md

new file mode 100644

index 00000000..eb7c8c05

--- /dev/null

+++ b/docs/source_code/custom_downloader.md

@@ -0,0 +1,300 @@

+# 自定义下载器

+

+下载器一共分为三种:**普通下载器**、**支持保持session的下载器**以及**浏览器渲染下载器**。默认已经在框架中内置,setting中的配置如下

+

+```

+DOWNLOADER = "feapder.network.downloader.RequestsDownloader" # 请求下载器

+SESSION_DOWNLOADER = "feapder.network.downloader.RequestsSessionDownloader"

+RENDER_DOWNLOADER = "feapder.network.downloader.SeleniumDownloader" # 渲染下载器

+```

+

+- session下载器当配置中`USE_SESSION = True`时会启用

+- 渲染下载器当使用浏览器下载功能时会启用

+

+这些下载器均为插件的形式,我们可以自定义

+

+## 自定义普通下载器

+

+1. 编写下载器。如在 `xxx-spider/downloader/my_downloader.py `下自定义了如下下载器

+

+ ```

+ import requests

+

+ from feapder.network.downloader.base import Downloader

+ from feapder.network.response import Response

+

+ class RequestsDownloader(Downloader):

+ def download(self, request) -> Response:

+ response = requests.request(

+ request.method, request.url, **request.requests_kwargs

+ )

+ # 将requests的response转化为feapder的Response 对象,方便后续解析时使用xpath、re等方法

+ response = Response(response)

+ return response

+ ```

+

+ 注:这里返回的response对象不强制要求为是feapder的Response。返回值会传到解析函数的response参数里,若返回的是文本,则接收到的也是文本。

+

+ 但为了代码可读性,建议将返回值转为feapder的Response后再返回。

+

+ 转feapder的Response的方式有如下几种

+

+ ```

+ # 方式1

+ # response参数为reqeusts的response

+ Response(response)

+

+ # 方式2

+ Response.from_text(text="html内容")

+ ```

+

+2. 在settings中指定下载器

+

+ ```

+ DOWNLOADER = "downloader.my_downloader.RequestsDownloader"

+ ```

+

+## 自定义session下载器

+

+1. 和普通下载器一样,都是继承`Downloader`,如何保持session,可自定义。代码示例 `xxx-spider/downloader/my_downloader.py `

+

+ ```

+ class RequestsSessionDownloader(Downloader):

+ session = None

+

+ @property

+ def _session(self):

+ if not self.__class__.session:

+ self.__class__.session = requests.Session()

+ # pool_connections – 缓存的 urllib3 连接池个数 pool_maxsize – 连接池中保存的最大连接数

+ http_adapter = HTTPAdapter(pool_connections=1000, pool_maxsize=1000)

+ # 任何使用该session会话的 HTTP 请求,只要其 URL 是以给定的前缀开头,该传输适配器就会被使用到。

+ self.__class__.session.mount("http", http_adapter)

+

+ return self.__class__.session

+

+ def download(self, request) -> Response:

+ response = self._session.request(

+ request.method, request.url, **request.requests_kwargs

+ )

+ response = Response(response)

+ return response

+ ```

+

+2. 在settings中指定下载器

+

+ ```

+ SESSION_DOWNLOADER = "downloader.my_downloader.RequestsSessionDownloader"

+ ```

+

+注意,这里要配置 `SESSION_DOWNLOADER`

+

+## 自定义浏览器渲染下载器

+

+1. 编写下载器 `xxx-spider/downloader/my_downloader.py `

+

+**若浏览器框架本身不支持多线程,但想在多线程中使用,如playwright使用,参考如下:**

+

+```

+import feapder.setting as setting

+import feapder.utils.tools as tools

+from feapder.network.downloader.base import RenderDownloader

+from feapder.network.response import Response

+from feapder.utils.webdriver import WebDriverPool, PlaywrightDriver

+

+

+class MyDownloader(RenderDownloader):

+ webdriver_pool: WebDriverPool = None

+

+ @property

+ def _webdriver_pool(self):

+ if not self.__class__.webdriver_pool:

+ self.__class__.webdriver_pool = WebDriverPool(

+ **setting.PLAYWRIGHT, driver_cls=PlaywrightDriver, thread_safe=True

+ )

+

+ return self.__class__.webdriver_pool

+

+ def download(self, request) -> Response:

+ # 代理优先级 自定义 > 配置文件 > 随机

+ if request.custom_proxies:

+ proxy = request.get_proxy()

+ elif setting.PLAYWRIGHT.get("proxy"):

+ proxy = setting.PLAYWRIGHT.get("proxy")

+ else:

+ proxy = request.get_proxy()

+

+ # user_agent优先级 自定义 > 配置文件 > 随机

+ if request.custom_ua:

+ user_agent = request.get_user_agent()

+ elif setting.PLAYWRIGHT.get("user_agent"):

+ user_agent = setting.PLAYWRIGHT.get("user_agent")

+ else:

+ user_agent = request.get_user_agent()

+

+ cookies = request.get_cookies()

+ url = request.url

+ render_time = request.render_time or setting.PLAYWRIGHT.get("render_time")

+ wait_until = setting.PLAYWRIGHT.get("wait_until") or "domcontentloaded"

+ if request.get_params():

+ url = tools.joint_url(url, request.get_params())

+

+ driver: PlaywrightDriver = self._webdriver_pool.get(

+ user_agent=user_agent, proxy=proxy

+ )

+ try:

+ if cookies:

+ driver.url = url

+ driver.cookies = cookies

+ driver.page.goto(url, wait_until=wait_until)

+

+ if render_time:

+ tools.delay_time(render_time)

+

+ html = driver.page.content()

+ response = Response.from_dict(

+ {

+ "url": driver.page.url,

+ "cookies": driver.cookies,

+ "_content": html.encode(),

+ "status_code": 200,

+ "elapsed": 666,

+ "headers": {

+ "User-Agent": driver.user_agent,

+ "Cookie": tools.cookies2str(driver.cookies),

+ },

+ }

+ )

+

+ response.driver = driver

+ response.browser = driver

+ return response

+ except Exception as e:

+ self._webdriver_pool.remove(driver)

+ raise e

+

+ def close(self, driver):

+ if driver:

+ self._webdriver_pool.remove(driver)

+

+ def put_back(self, driver):

+ """

+ 释放浏览器对象

+ """

+ self._webdriver_pool.put(driver)

+

+ def close_all(self):

+ """

+ 关闭所有浏览器

+ """

+ # 不支持

+ # self._webdriver_pool.close()

+ pass

+```

+

+这里使用了WebDriverPool,参数`thread_safe=True`,即要保证使用时的线程安全,确保同个浏览器对象只能被同一个线程调用

+

+**若浏览器框架本身支持多线程,如selenium,则参考如下**

+

+```

+import feapder.setting as setting

+import feapder.utils.tools as tools

+from feapder.network.downloader.base import RenderDownloader

+from feapder.network.response import Response

+from feapder.utils.webdriver import WebDriverPool, SeleniumDriver

+

+

+class MyDownloader(RenderDownloader):

+ webdriver_pool: WebDriverPool = None

+

+ @property

+ def _webdriver_pool(self):

+ if not self.__class__.webdriver_pool:

+ self.__class__.webdriver_pool = WebDriverPool(

+ **setting.WEBDRIVER, driver=SeleniumDriver

+ )

+

+ return self.__class__.webdriver_pool

+

+ def download(self, request) -> Response:

+ # 代理优先级 自定义 > 配置文件 > 随机

+ if request.custom_proxies:

+ proxy = request.get_proxy()

+ elif setting.WEBDRIVER.get("proxy"):

+ proxy = setting.WEBDRIVER.get("proxy")

+ else:

+ proxy = request.get_proxy()

+

+ # user_agent优先级 自定义 > 配置文件 > 随机

+ if request.custom_ua:

+ user_agent = request.get_user_agent()

+ elif setting.WEBDRIVER.get("user_agent"):

+ user_agent = setting.WEBDRIVER.get("user_agent")

+ else:

+ user_agent = request.get_user_agent()

+

+ cookies = request.get_cookies()

+ url = request.url

+ render_time = request.render_time or setting.WEBDRIVER.get("render_time")

+ if request.get_params():

+ url = tools.joint_url(url, request.get_params())

+

+ browser: SeleniumDriver = self._webdriver_pool.get(

+ user_agent=user_agent, proxy=proxy

+ )

+ try:

+ browser.get(url)

+ if cookies:

+ browser.cookies = cookies

+ # 刷新使cookie生效

+ browser.get(url)

+

+ if render_time:

+ tools.delay_time(render_time)

+

+ html = browser.page_source

+ response = Response.from_dict(

+ {

+ "url": browser.current_url,

+ "cookies": browser.cookies,

+ "_content": html.encode(),

+ "status_code": 200,

+ "elapsed": 666,

+ "headers": {

+ "User-Agent": browser.user_agent,

+ "Cookie": tools.cookies2str(browser.cookies),

+ },

+ }

+ )

+

+ response.driver = browser

+ response.browser = browser

+ return response

+ except Exception as e:

+ self._webdriver_pool.remove(browser)

+ raise e

+

+ def close(self, driver):

+ if driver:

+ self._webdriver_pool.remove(driver)

+

+ def put_back(self, driver):

+ """

+ 释放浏览器对象

+ """

+ self._webdriver_pool.put(driver)

+

+ def close_all(self):

+ """

+ 关闭所有浏览器

+ """

+ self._webdriver_pool.close()

+```

+

+2. 在settings中指定下载器

+

+```

+RENDER_DOWNLOADER = "downloader.my_downloader.MyDownloader"

+```

+

+注,这里要写`RENDER_DOWNLOADER`

\ No newline at end of file

diff --git a/docs/source_code/pipeline.md b/docs/source_code/pipeline.md

index 14dd7455..6a04dbf1 100644

--- a/docs/source_code/pipeline.md

+++ b/docs/source_code/pipeline.md

@@ -2,11 +2,26 @@

Pipeline是数据入库时流经的管道,用户可自定义,以便对接其他数据库。

-框架已内置mysql及mongo管道,其他管道作为扩展方式提供,可从[feapder_pipelines](https://github.com/Boris-code/feapder_pipelines)项目中按需安装

+框架已内置mysql、mongo、csv管道,其他管道作为扩展方式提供,可从[feapder_pipelines](https://github.com/Boris-code/feapder_pipelines)项目中按需安装

项目地址:https://github.com/Boris-code/feapder_pipelines

-## 使用方式

+## 选择内置的pipeline

+

+在配置文件 `setting.py` 中的 `ITEM_PIPELINES` 中启用:

+

+```python

+ITEM_PIPELINES = [

+ "feapder.pipelines.mysql_pipeline.MysqlPipeline",

+ # "feapder.pipelines.mongo_pipeline.MongoPipeline",

+ # "feapder.pipelines.csv_pipeline.CsvPipeline",

+ # "feapder.pipelines.console_pipeline.ConsolePipeline",

+]

+```

+

+然后 爬虫中`yield`的`item`会流经选择的pipeline自动存储

+

+## 自定义pipeline

注:item会被聚合成多条一起流经pipeline,方便批量入库

diff --git a/docs/source_code/proxy.md b/docs/source_code/proxy.md

index b961ecf0..de87845a 100644

--- a/docs/source_code/proxy.md

+++ b/docs/source_code/proxy.md

@@ -1,12 +1,13 @@

# 代理使用说明

-代理使用有两种方式

-1. 用框架内置的代理池

-2. 自己写

+代理使用有三种方式

+1. 使用框架内置代理池

+2. 自定义代理池

+3. 请求中直接指定

-## 1. 框架内置的代理池

+## 方式1. 使用框架内置代理池

-### 基本使用

+### 配置代理

在配置文件中配置代理提取接口

@@ -14,9 +15,10 @@

# 设置代理

PROXY_EXTRACT_API = None # 代理提取API ,返回的代理分割符为\r\n

PROXY_ENABLE = True

+PROXY_MAX_FAILED_TIMES = 5 # 代理最大失败次数,超过则不使用,自动删除

```

-要求API返回的代理格式为:

+要求API返回的代理格式为使用 /r/n 分隔:

```

ip:port

@@ -26,13 +28,11 @@ ip:port

这样feapder在请求时会自动随机使用上面的代理请求了

-### 高阶

+## 管理代理

-> 注意:高阶用法现在不太友好,后期会调整使用方式

+1. 删除代理(默认是请求异常连续5次,再删除代理)

-1. 标记代理失效或延时使用

-

- 例如在发生异常时处理代理

+ 例如在发生异常时删除代理

```python

import feapder

@@ -44,49 +44,48 @@ ip:port

print(response)

def exception_request(self, request, response):

-

- # request.proxies_pool.tag_proxy(request.requests_kwargs.get("proxies"), -1) # 废弃本次代理

- request.proxies_pool.tag_proxy(request.requests_kwargs.get("proxies"), 1, 30) # 延迟本次代理30秒后再使用

- ```

-

-1. 指定代理拉取时间间隔等

-

- 在代码头部给feapder.Request.proxies_pool重新赋值

-

- ```python

- import feapder

- from feapder.network.proxy_pool import ProxyPool

-

- proxy_pool= ProxyPool(reset_interval_max=180, reset_interval=5)

- feapder.Request.proxies_pool = proxy_pool

+ request.del_proxy()

+

```

- 相当于修改了代理池的默认参数值,更多参数看源码

+## 方式2. 自定义代理池

-1. 从redis里提取代理

+1. 编写代理池:例如在你的项目下创建个my_proxypool.py,实现下面的函数

```python

- import feapder

- from feapder.network.proxy_pool import ProxyPool

-

- proxy_pool = ProxyPool(

- proxy_source_url="redis://:passwd@host:ip/db", redis_proxies_key="proxies"

- )

- feapder.Request.proxies_pool = proxy_pool

+ from feapder.network.proxy_pool import BaseProxyPool

+

+ class MyProxyPool(BaseProxyPool):

+ def get_proxy(self):

+ """

+ 获取代理

+ Returns:

+ {"http": "xxx", "https": "xxx"}

+ """

+ pass

+

+ def del_proxy(self, proxy):

+ """

+ @summary: 删除代理

+ ---------

+ @param proxy: xxx

+ """

+ pass

```

-

- 要求redis使用zset集合存储代理,存储内容示例如下:

+

+3. 修改setting的代理配置

+

```

- ip:port

- ip:port

- ip:port

+ PROXY_POOL = "my_proxypool.MyProxyPool" # 代理池

```

- redis_proxies_key及为存储代理的key,每次拉取时会拉取全量

+ 将编写好的代理池配置进来,值为类的模块路径,需要指定到具体的类名

+

+

-## 2. 自己写

+## 方式3. 不使用代理池,直接给请求指定代理

-自己写就比较灵活,自己随机取个代理,然后给request赋值即可,例如在下载中间件里使用

+直接给request.proxies赋值即可,例如在下载中间件里使用

```python

import feapder

@@ -96,7 +95,7 @@ class TestProxy(feapder.AirSpider):

yield feapder.Request("https://www.baidu.com")

def download_midware(self, request):

- # 这里随机取个代理使用即可

+ # 这里使用代理使用即可

request.proxies = {"https": "https://ip:port", "http": "http://ip:port"}

return request

diff --git "a/docs/source_code/\346\212\245\350\255\246\345\217\212\347\233\221\346\216\247.md" "b/docs/source_code/\346\212\245\350\255\246\345\217\212\347\233\221\346\216\247.md"

index 023bd06f..87dbc695 100644

--- "a/docs/source_code/\346\212\245\350\255\246\345\217\212\347\233\221\346\216\247.md"

+++ "b/docs/source_code/\346\212\245\350\255\246\345\217\212\347\233\221\346\216\247.md"

@@ -1,5 +1,7 @@

# 报警及监控

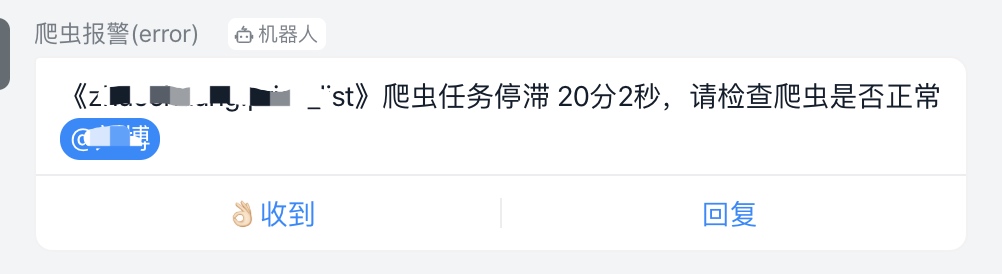

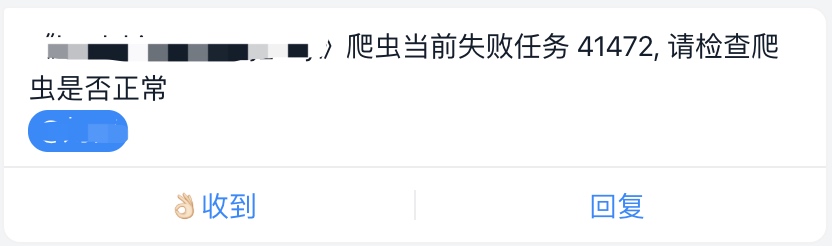

+支持钉钉、飞书、企业微信、邮件报警

+

## 钉钉报警

条件:需要有钉钉群,需要获取钉钉机器人的Webhook地址

@@ -10,15 +12,19 @@

+或使用加签方式,然后在setting中设置密钥

+

相关配置:

```python

# 钉钉报警

DINGDING_WARNING_URL = "" # 钉钉机器人api

DINGDING_WARNING_PHONE = "" # 报警人 支持列表,可指定多个

+DINGDING_WARNING_ALL = False # 是否提示所有人, 默认为False

+DINGDING_WARNING_SECRET = None # 加签密钥

```

-## 微信报警

+## 企业微信报警

条件:需要企业微信群,并获取企业微信机器人的Webhook地址

@@ -39,6 +45,17 @@ WECHAT_WARNING_PHONE = "" # 报警人 将会在群内@此人, 支持列表,

WECHAT_WARNING_ALL = False # 是否提示所有人, 默认为False

```

+## 飞书报警

+

+可参考文档设置机器人:https://open.feishu.cn/document/ukTMukTMukTM/ucTM5YjL3ETO24yNxkjN#e1cdee9f

+

+然后在feapder的setting文件中修改如下配置

+

+```

+FEISHU_WARNING_URL = "" # 飞书机器人api

+FEISHU_WARNING_USER = None # 报警人 {"open_id":"ou_xxxxx", "name":"xxxx"} 或 [{"open_id":"ou_xxxxx", "name":"xxxx"}]

+FEISHU_WARNING_ALL = False # 是否提示所有人, 默认为False

+```

## 邮件报警

@@ -69,6 +86,20 @@ EMAIL_RECEIVER = "" # 收件人 支持列表,可指定多个

4. 将本邮箱账号添加到白名单中

+## Qmsg酱报警

+

+Qmsg酱是一个QQ消息推送机器人,用来通知自己消息的免费服务。

+

+可以参考文档:https://qmsg.zendee.cn/docs/api/

+

+```python

+# QMSG报警

+QMSG_WARNING_URL = "" # qmsg机器人api

+QMSG_WARNING_QQ = "" # 指定要接收消息的QQ号或者QQ群。多个以英文逗号分割,例如:12345,12346,支持列表,可指定多人

+QMSG_WARNING_BOT = "" # 机器人的QQ号

+```

+

+

## 报警间隔及报警级别

框架会对相同的报警进行过滤,防止刷屏,默认的报警时间间隔为1小时,可通过以下配置修改:

diff --git "a/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Playwright.md" "b/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Playwright.md"

new file mode 100644

index 00000000..8483b126

--- /dev/null

+++ "b/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Playwright.md"

@@ -0,0 +1,258 @@

+# 浏览器渲染-Playwright

+

+采集动态页面时(Ajax渲染的页面),常用的有两种方案。一种是找接口拼参数,这种方式比较复杂但效率高,需要一定的爬虫功底;另外一种是采用浏览器渲染的方式,直接获取源码,简单方便

+

+框架支持playwright渲染下载,每个线程持有一个playwright实例

+

+

+## 使用方式:

+

+1. 修改配置文件的渲染下载器:

+

+ ```

+ RENDER_DOWNLOADER="feapder.network.downloader.PlaywrightDownloader"

+ ```

+2. 使用

+

+ ```python

+ def start_requests(self):

+ yield feapder.Request("https://news.qq.com/", render=True)

+ ```

+

+在返回的Request中传递`render=True`即可

+

+框架支持`chromium`、`firefox`、`webkit` 三种浏览器渲染,可通过[配置文件](source_code/配置文件)进行配置。相关配置如下:

+

+```python

+PLAYWRIGHT = dict(

+ user_agent=None, # 字符串 或 无参函数,返回值为user_agent

+ proxy=None, # xxx.xxx.xxx.xxx:xxxx 或 无参函数,返回值为代理地址

+ headless=False, # 是否为无头浏览器

+ driver_type="chromium", # chromium、firefox、webkit

+ timeout=30, # 请求超时时间

+ window_size=(1024, 800), # 窗口大小

+ executable_path=None, # 浏览器路径,默认为默认路径

+ download_path=None, # 下载文件的路径

+ render_time=0, # 渲染时长,即打开网页等待指定时间后再获取源码

+ wait_until="networkidle", # 等待页面加载完成的事件,可选值:"commit", "domcontentloaded", "load", "networkidle"

+ use_stealth_js=False, # 使用stealth.min.js隐藏浏览器特征

+ page_on_event_callback=None, # page.on() 事件的回调 如 page_on_event_callback={"dialog": lambda dialog: dialog.accept()}

+ storage_state_path=None, # 保存浏览器状态的路径

+ url_regexes=None, # 拦截接口,支持正则,数组类型

+ save_all=False, # 是否保存所有拦截的接口, 配合url_regexes使用,为False时只保存最后一次拦截的接口

+)

+```

+

+ - `feapder.Request` 也支持`render_time`参数, 优先级大于配置文件中的`render_time`

+

+ - 代理使用优先级:`feapder.Request`指定的代理 > 配置文件中的`PROXY_EXTRACT_API` > webdriver配置文件中的`proxy`

+

+ - user_agent使用优先级:`feapder.Request`指定的header里的`User-Agent` > 框架随机的`User-Agent` > webdriver配置文件中的`user_agent`

+

+## 设置User-Agent

+

+> 每次生成一个新的浏览器实例时生效

+

+### 方式1:

+

+通过配置文件的 `user_agent` 参数设置

+

+### 方式2:

+

+通过 `feapder.Request`携带,优先级大于配置文件, 如:

+

+```python

+def download_midware(self, request):

+ request.headers = {

+ "User-Agent": "xxxxxxxx"

+ }

+ return request

+```

+

+## 设置代理

+

+> 每次生成一个新的浏览器实例时生效

+

+### 方式1:

+

+通过配置文件的 `proxy` 参数设置

+

+### 方式2:

+

+通过 `feapder.Request`携带,优先级大于配置文件, 如:

+

+```python

+def download_midware(self, request):

+ request.proxies = {

+ "https": "https://xxx.xxx.xxx.xxx:xxxx"

+ }

+ return request

+```

+

+## 设置Cookie

+

+通过 `feapder.Request`携带,如:

+

+```python

+def download_midware(self, request):

+ request.headers = {

+ "Cookie": "key=value; key2=value2"

+ }

+ return request

+```

+

+或者

+

+```python

+def download_midware(self, request):

+ request.cookies = {

+ "key": "value",

+ "key2": "value2",

+ }

+ return request

+```

+

+或者

+

+```python

+def download_midware(self, request):

+ request.cookies = [

+ {

+ "domain": "xxx",

+ "name": "xxx",

+ "value": "xxx",

+ "expirationDate": "xxx"

+ },

+ ]

+ return request

+```

+

+## 拦截数据示例

+

+> 注意:主函数使用run方法运行,不能使用start

+

+```python

+from playwright.sync_api import Response

+from feapder.utils.webdriver import (

+ PlaywrightDriver,

+ InterceptResponse,

+ InterceptRequest,

+)

+

+import feapder

+

+

+def on_response(response: Response):

+ print(response.url)

+

+

+class TestPlaywright(feapder.AirSpider):

+ __custom_setting__ = dict(

+ RENDER_DOWNLOADER="feapder.network.downloader.PlaywrightDownloader",

+ PLAYWRIGHT=dict(

+ user_agent=None, # 字符串 或 无参函数,返回值为user_agent

+ proxy=None, # xxx.xxx.xxx.xxx:xxxx 或 无参函数,返回值为代理地址

+ headless=False, # 是否为无头浏览器

+ driver_type="chromium", # chromium、firefox、webkit

+ timeout=30, # 请求超时时间

+ window_size=(1024, 800), # 窗口大小

+ executable_path=None, # 浏览器路径,默认为默认路径

+ download_path=None, # 下载文件的路径

+ render_time=0, # 渲染时长,即打开网页等待指定时间后再获取源码

+ wait_until="networkidle", # 等待页面加载完成的事件,可选值:"commit", "domcontentloaded", "load", "networkidle"

+ use_stealth_js=False, # 使用stealth.min.js隐藏浏览器特征

+ # page_on_event_callback=dict(response=on_response), # 监听response事件

+ # page.on() 事件的回调 如 page_on_event_callback={"dialog": lambda dialog: dialog.accept()}

+ storage_state_path=None, # 保存浏览器状态的路径

+ url_regexes=["wallpaper/list"], # 拦截接口,支持正则,数组类型

+ save_all=True, # 是否保存所有拦截的接口

+ ),

+ )

+

+ def start_requests(self):

+ yield feapder.Request(

+ "http://www.soutushenqi.com/image/search/?searchWord=%E6%A0%91%E5%8F%B6",

+ render=True,

+ )

+

+ def parse(self, reqeust, response):

+ driver: PlaywrightDriver = response.driver

+

+ intercept_response: InterceptResponse = driver.get_response("wallpaper/list")

+ intercept_request: InterceptRequest = intercept_response.request

+

+ req_url = intercept_request.url

+ req_header = intercept_request.headers

+ req_data = intercept_request.data

+ print("请求url", req_url)

+ print("请求header", req_header)

+ print("请求data", req_data)

+

+ data = driver.get_json("wallpaper/list")

+ print("接口返回的数据", data)

+

+ print("------ 测试save_all=True ------- ")

+

+ # 测试save_all=True

+ all_intercept_response: list = driver.get_all_response("wallpaper/list")

+ for intercept_response in all_intercept_response:

+ intercept_request: InterceptRequest = intercept_response.request

+ req_url = intercept_request.url

+ req_header = intercept_request.headers

+ req_data = intercept_request.data

+ print("请求url", req_url)

+ print("请求header", req_header)

+ print("请求data", req_data)

+

+ all_intercept_json = driver.get_all_json("wallpaper/list")

+ for intercept_json in all_intercept_json:

+ print("接口返回的数据", intercept_json)

+

+ # 千万别忘了

+ driver.clear_cache()

+

+

+if __name__ == "__main__":

+ TestPlaywright(thread_count=1).run()

+```

+可通过配置的`page_on_event_callback`参数自定义事件的回调,如设置`on_response`的事件回调,亦可直接使用`url_regexes`设置拦截的接口

+

+## 操作浏览器对象示例

+

+> 注意:主函数使用run方法运行,不能使用start

+

+```python

+import time

+

+from playwright.sync_api import Page

+

+import feapder

+from feapder.utils.webdriver import PlaywrightDriver

+

+

+class TestPlaywright(feapder.AirSpider):

+ __custom_setting__ = dict(

+ RENDER_DOWNLOADER="feapder.network.downloader.PlaywrightDownloader",

+ )

+

+ def start_requests(self):

+ yield feapder.Request("https://www.baidu.com", render=True)

+

+ def parse(self, reqeust, response):

+ driver: PlaywrightDriver = response.driver

+ page: Page = driver.page

+

+ page.type("#kw", "feapder")

+ page.click("#su")

+ page.wait_for_load_state("networkidle")

+ time.sleep(1)

+

+ html = page.content()

+ response.text = html # 使response加载最新的页面

+ for data_container in response.xpath("//div[@class='c-container']"):

+ print(data_container.xpath("string(.//h3)").extract_first())

+

+

+if __name__ == "__main__":

+ TestPlaywright(thread_count=1).run()

+```

\ No newline at end of file

diff --git "a/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223.md" "b/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Selenium.md"

similarity index 92%

rename from "docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223.md"

rename to "docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Selenium.md"

index ac728047..089f9537 100644

--- "a/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223.md"

+++ "b/docs/source_code/\346\265\217\350\247\210\345\231\250\346\270\262\346\237\223-Selenium.md"

@@ -1,10 +1,10 @@

-# 浏览器渲染

+# 浏览器渲染-Selenium

采集动态页面时(Ajax渲染的页面),常用的有两种方案。一种是找接口拼参数,这种方式比较复杂但效率高,需要一定的爬虫功底;另外一种是采用浏览器渲染的方式,直接获取源码,简单方便

框架内置一个浏览器渲染池,默认的池子大小为1,请求时重复利用浏览器实例,只有当代理失效请求异常时,才会销毁、创建一个新的浏览器实例

-内置浏览器渲染支持 **CHROME** 、**PHANTOMJS**、**FIREFOX**

+内置浏览器渲染支持 **CHROME**、**EDGE**、**PHANTOMJS**、**FIREFOX**

## 使用方式:

@@ -14,7 +14,7 @@ def start_requests(self):

```

在返回的Request中传递`render=True`即可

-框架支持`CHROME`、`PHANTOMJS`、`FIREFOX` 三种浏览器渲染,可通过[配置文件](source_code/配置文件)进行配置。相关配置如下:

+框架支持`CHROME`、`EDGE`、`PHANTOMJS`、`FIREFOX` 三种浏览器渲染,可通过[配置文件](source_code/配置文件)进行配置。相关配置如下:

```python

# 浏览器渲染

@@ -24,7 +24,7 @@ WEBDRIVER = dict(

user_agent=None, # 字符串 或 无参函数,返回值为user_agent

proxy=None, # xxx.xxx.xxx.xxx:xxxx 或 无参函数,返回值为代理地址

headless=False, # 是否为无头浏览器

- driver_type="CHROME", # CHROME 、PHANTOMJS、FIREFOX

+ driver_type="CHROME", # CHROME、EDGE、PHANTOMJS、FIREFOX

timeout=30, # 请求超时时间

window_size=(1024, 800), # 窗口大小

executable_path=None, # 浏览器路径,默认为默认路径

@@ -73,16 +73,6 @@ def download_midware(self, request):

通过 `feapder.Request`携带,优先级大于配置文件, 如:

-```python

-def download_midware(self, request):

- request.proxies = {

- "http": "http://xxx.xxx.xxx.xxx:xxxx"

- }

- return request

-```

-

-或者

-

```python

def download_midware(self, request):

request.proxies = {

@@ -90,7 +80,7 @@ def download_midware(self, request):

}

return request

```

-

+

## 设置Cookie

通过 `feapder.Request`携带,如:

@@ -114,6 +104,21 @@ def download_midware(self, request):

return request

```

+或者

+

+```python

+def download_midware(self, request):

+ request.cookies = [

+ {

+ "domain": "xxx",

+ "name": "xxx",

+ "value": "xxx",

+ "expirationDate": "xxx"

+ },

+ ]

+ return request

+```

+

## 操作浏览器对象

通过 `response.browser` 获取浏览器对象

@@ -137,10 +142,10 @@ class TestRender(feapder.AirSpider):

browser.find_element_by_id("su").click()

time.sleep(5)

print(browser.page_source)

-

+

# response也是可以正常使用的

# response.xpath("//title")

-

+

# 若有滚动,可通过如下方式更新response,使其加载滚动后的内容

# response.text = browser.page_source

@@ -198,6 +203,7 @@ print("返回内容", xhr_response.content)

代码:

+

```python

import time

@@ -213,7 +219,7 @@ class TestRender(feapder.AirSpider):

user_agent=None, # 字符串 或 无参函数,返回值为user_agent

proxy=None, # xxx.xxx.xxx.xxx:xxxx 或 无参函数,返回值为代理地址

headless=False, # 是否为无头浏览器

- driver_type="CHROME", # CHROME、PHANTOMJS、FIREFOX